ChatGPT Gets Its “Wolfram Superpowers”!—Stephen Wolfram Writings


To enable the functionality described here, select and install the Wolfram plugin from within ChatGPT.

Note that this capability is so far available only to some ChatGPT Plus users; for more information, see OpenAI’s announcement.

In Just Two and a Half Months…

Early in January I wrote about the possibility of connecting ChatGPT to Wolfram|Alpha. And today—just two and a half months later—I’m excited to announce that it’s happened! Thanks to some heroic software engineering by our team and by OpenAI, ChatGPT can now call on Wolfram|Alpha—and Wolfram Language as well—to give it what we might think of as “computational superpowers”. It’s still very early days for all of this, but it’s already very impressive—and one can begin to see how amazingly powerful (and perhaps even revolutionary) what we can call “

Back in January, I made the point that, as an LLM neural net, ChatGPT—for all its remarkable prowess in textually generating material “like” what it’s read from the web, etc.—can’t itself be expected to do actual nontrivial computations, or to systematically produce correct (rather than just “looks roughly right”) data, etc. But when it’s connected to the Wolfram plugin it can do these things. So here’s my (very simple) first example from January, but now done by ChatGPT with “Wolfram superpowers” installed:

It’s a correct result (which in January it wasn’t)—found by actual computation. And here’s a bonus: immediate visualization:

How did this work? Under the hood, ChatGPT is formulating a query for Wolfram|Alpha—then sending it to Wolfram|Alpha for computation, and then “deciding what to say” based on reading the results it got back. You can see this back and forth by clicking the “Used Wolfram” box (and by looking at this you can check that ChatGPT didn’t “make anything up”):

There are lots of nontrivial things going on here, on both the ChatGPT and Wolfram|Alpha sides. But the upshot is a good, correct result, knitted into a nice, flowing piece of text.
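The round trip described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `formulate_query`, `call_tool` and `compose_reply` are hypothetical stand-ins (not OpenAI or Wolfram APIs), and the stubbed tool returns a canned string rather than a real Wolfram|Alpha result.

```python
from typing import Callable, Dict


def answer_with_tool(
    question: str,
    formulate_query: Callable[[str], str],
    call_tool: Callable[[str], str],
    compose_reply: Callable[[str, str], str],
) -> Dict[str, str]:
    """One round trip: the model writes a query, the tool computes, the model writes prose."""
    query = formulate_query(question)             # model turns the question into a tool query
    tool_result = call_tool(query)                # tool does the actual computation
    reply = compose_reply(question, tool_result)  # model words its answer from the result
    # Keeping the query and the raw result is what lets a reader inspect the exchange later.
    return {"query": query, "tool_result": tool_result, "reply": reply}


# Hypothetical stubs standing in for the language model and the plugin.
def stub_formulate(question: str) -> str:
    return question.rstrip("?").lower()


def stub_tool(query: str) -> str:
    return f"[computed result for: {query}]"


def stub_compose(question: str, tool_result: str) -> str:
    return f"According to the tool, {tool_result}"


trace = answer_with_tool("How far is it from A to B?", stub_formulate, stub_tool, stub_compose)
print(trace["query"])
print(trace["reply"])
```

Returning the query and the raw tool result alongside the reply mirrors the “Used Wolfram” box: the prose answer can always be checked against what was actually asked and what actually came back.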

Let’s try another example, also from what I wrote in January:

A fine result, worthy of our technology. And again, we can get a bonus:

In January, I noted that ChatGPT ended up just “making up” plausible (but wrong) data when given this prompt:

But now it calls the Wolfram plugin and gets a good, authoritative answer. And, as a bonus, we can also make a visualization:

Another example from back in January that now comes out correctly is:

If you actually try these examples, don’t be surprised if they work differently (sometimes better, sometimes worse) from what I’m showing here. Since ChatGPT uses randomness in generating its responses, different things can happen even when you ask it the exact same question (even in a fresh session). It feels “very human”. But different from the solid “right-answer-and-it-doesn’t-change-if-you-ask-it-again” experience that one gets in Wolfram|Alpha and Wolfram Language.
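To make the role of randomness concrete, here is a minimal sketch of weighted random choice among candidate continuations. It is an assumption-laden toy, not ChatGPT's actual decoding code: the scores are made up, and `sample_next_word` and its `temperature` parameter are illustrative names.

```python
import math
import random
from typing import Dict, Optional


def sample_next_word(scores: Dict[str, float], temperature: float,
                     rng: Optional[random.Random] = None) -> str:
    """Pick one word at random, weighted by a softmax of the scores."""
    rng = rng or random.Random()
    words = list(scores)
    top = max(scores.values())
    # Subtract the maximum before exponentiating for numerical stability.
    weights = [math.exp((scores[w] - top) / temperature) for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


# Made-up scores for three candidate continuations (illustrative only).
scores = {"correct": 2.0, "plausible": 1.5, "wrong": 0.5}

# Repeated runs of the "same question" can give different picks.
picks = [sample_next_word(scores, temperature=1.0) for _ in range(10)]
print(picks)

# With a very low temperature the top-scoring word dominates the choice.
print(sample_next_word(scores, temperature=0.01))
```

A deterministic computation, by contrast, has no sampling step at all, which is why asking it again returns the same answer.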

Here’s an example where we saw ChatGPT (rather impressively) “having a conversation” with the Wolfram plugin, after at first finding out that it got the “wrong Mercury”:

One particularly significant thing here is that ChatGPT isn’t just using us to do a “dead-end” operation like show the content of a webpage. Rather, we’re acting much more like a true “brain implant” for ChatGPT—where it asks us things whenever it needs to, and we give responses that it can weave back into whatever it’s doing. It’s rather impressive to see in action. And—although there’s definitely much more polishing to be done—what’s already there goes a long way towards (among other things) giving ChatGPT the ability to deliver accurate, curated knowledge and data—as well as correct, nontrivial computations.

But there’s more too. We already saw examples where we were able to provide custom-created visualizations to ChatGPT. And with our computation capabilities we’re routinely able to make “truly original” content—computations that have simply never been done before. And there’s something else: while “pure ChatGPT” is restricted to things it “learned during its training”, by calling us it can get up-to-the-moment data.

This can be based on our real-time data feeds (here we’re getting called twice; once for each place):

Or it can be based on “science-style” predictive computations:

Or both:

Some of the Things You Can Do

There’s a lot that Wolfram|Alpha and Wolfram Language cover:

And now (almost) all of this is accessible to ChatGPT—opening up a tremendous breadth and depth of new possibilities. And to give some sense of these, here are a few (simple) examples:

Algorithms

Audio

Currency conversion

Function plotting

Genealogy

Geo data

Mathematical functions

Music

Pokémon

A Modern Human + AI Workflow

ChatGPT is built to be able to have back-and-forth conversation with humans. But what can one do when that conversation has actual computation and computational knowledge in it? Here’s an example. Start by asking a “world knowledge” question:

And, yes, by “opening the box” one can check that the right question was asked to us, and what the raw response we gave was. But now we can go on and ask for a map:

But there are “prettier” map projections we could have used. And with ChatGPT’s “general knowledge” based on its reading of the web, etc. we can just ask it to use one:

But maybe we want a heat map instead. Again, we can just ask it to produce this—underneath using our technology:

Let’s change the projection again, now asking it again to pick it using its “general knowledge”:

And, yes, it got the projection “right”. But not the centering. So let’s ask it to fix that:

OK, so what do we have here? We’ve got something that we “collaborated” to build. We incrementally said what we wanted; the AI (i.e.

If we copy the code out into a Wolfram Notebook, we can immediately run it, and we find it has a nice “luxury feature”—as ChatGPT claimed in its description, there are dynamic tooltips giving the name of each country:

(And, yes, it’s a slight pity that this code just has explicit numbers in it, rather than the original symbolic query about beef production. And this happened because ChatGPT asked the original question to Wolfram|Alpha, then fed the results to Wolfram Language. But I consider the fact that this whole sequence works at all extremely impressive.)

How It Works—and Wrangling the AI

What’s happening “under the hood” with ChatGPT and the Wolfram plugin? Remember that the core of ChatGPT is a “large language model” (LLM) that’s trained from the web, etc. to generate a “reasonable continuation” from any text it’s given. But as a final part of its training ChatGPT is also taught how to “hold conversations”, and when to “ask something to someone else”—where that “someone” might be a human, or, for that matter, a plugin. And in particular, it’s been taught when to reach out to the Wolfram plugin.

The Wolfram plugin actually has two entry points: a Wolfram|Alpha one and a Wolfram Language one. The Wolfram|Alpha one is in a sense the “easier” for ChatGPT to deal with; the Wolfram Language one is ultimately the more powerful. The reason the Wolfram|Alpha one is easier is that what it takes as input is just natural language—which is exactly what ChatGPT routinely deals with. And, more than that, Wolfram|Alpha is built to be forgiving—and in effect to deal with “typical human-like input”, more or less however messy that may be.

Wolfram Language, on the other hand, is set up to be precise and well defined—and capable of being used to build arbitrarily sophisticated towers of computation. Inside Wolfram|Alpha, what it’s doing is to translate natural language to precise Wolfram Language. In effect it’s catching the “imprecise natural language” and “funneling it” into precise Wolfram Language.

When ChatGPT calls the Wolfram plugin it often just feeds natural language to Wolfram|Alpha. But ChatGPT has by this point learned a certain amount about writing Wolfram Language itself. And in the end, as we’ll discuss later, that’s a more flexible and powerful way to communicate. But it doesn’t work unless the Wolfram Language code is exactly right. To get it to that point is partly a matter of training. But there’s another thing too: given some candidate code, the Wolfram plugin can run it, and if the results are obviously wrong (like they generate lots of errors), ChatGPT can attempt to fix it, and try running it again. (More elaborately, ChatGPT can try to generate tests to run, and change the code if they fail.)
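The run-and-repair cycle described here can be sketched as a small loop. This is a rough illustration, not the plugin's actual implementation: `write_code` and `run_code` are hypothetical callables, and the stubs below only pretend to be a language model and an evaluator.

```python
from typing import Callable, List, Optional, Tuple


def generate_and_repair(
    request: str,
    write_code: Callable[[str, Optional[str], List[str]], str],
    run_code: Callable[[str], Tuple[str, List[str]]],
    max_attempts: int = 3,
) -> Tuple[Optional[str], str, int]:
    """Ask for code, run it, and feed any errors back until it runs cleanly.

    Returns (result, last_code, attempts); result is None if every attempt failed.
    """
    code: Optional[str] = None
    errors: List[str] = []
    for attempt in range(1, max_attempts + 1):
        code = write_code(request, code, errors)  # first draft, or a fix of the last one
        result, errors = run_code(code)           # evaluate the candidate
        if not errors:
            return result, code, attempt
    return None, code or "", max_attempts


# Hypothetical stubs: the "model" misspells a function name on its first try,
# and the "evaluator" only recognizes that one mistake.
def stub_write(request: str, previous: Optional[str], errors: List[str]) -> str:
    if previous is None:
        return "Totl[{1, 2, 3}]"
    return previous.replace("Totl[", "Total[")


def stub_run(code: str) -> Tuple[str, List[str]]:
    if "Totl[" in code:
        return "", ["unknown function Totl"]
    return "6", []


result, final_code, attempts = generate_and_repair("add up 1, 2, 3", stub_write, stub_run)
print(result, final_code, attempts)
```

Capping the number of attempts is a design choice made for this sketch; it keeps a model that never converges from looping indefinitely.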

There’s more to be developed here, but already one sometimes sees ChatGPT go back and forth multiple times. It might be rewriting its Wolfram|Alpha query (say simplifying it by taking out irrelevant parts), or it might be deciding to switch between Wolfram|Alpha and Wolfram Language, or it might be rewriting its Wolfram Language code. Telling it how to do these things is a matter for the initial “plugin prompt”.

And writing this prompt is a strange activity—perhaps our first serious experience of trying to “communicate with an alien intelligence”. Of course it helps that the “alien intelligence” has been trained with a vast corpus of human-written text. So, for example, it knows English (a bit like all those corny science fiction aliens…). And we can tell it things like “If the user input is in a language other than English, translate to English and send an appropriate query to Wolfram|Alpha, then provide your response in the language of the original input.”

Sometimes we’ve found we have to be quite insistent (note the all caps): “When writing Wolfram Language code, NEVER use snake case for variable names; ALWAYS use camel case for variable names.” And even with that insistence, ChatGPT will still sometimes do the wrong thing. The whole process of “prompt engineering” feels a bit like animal wrangling: you’re trying to get ChatGPT to do what you want, but it’s hard to know just what it will take to achieve that.

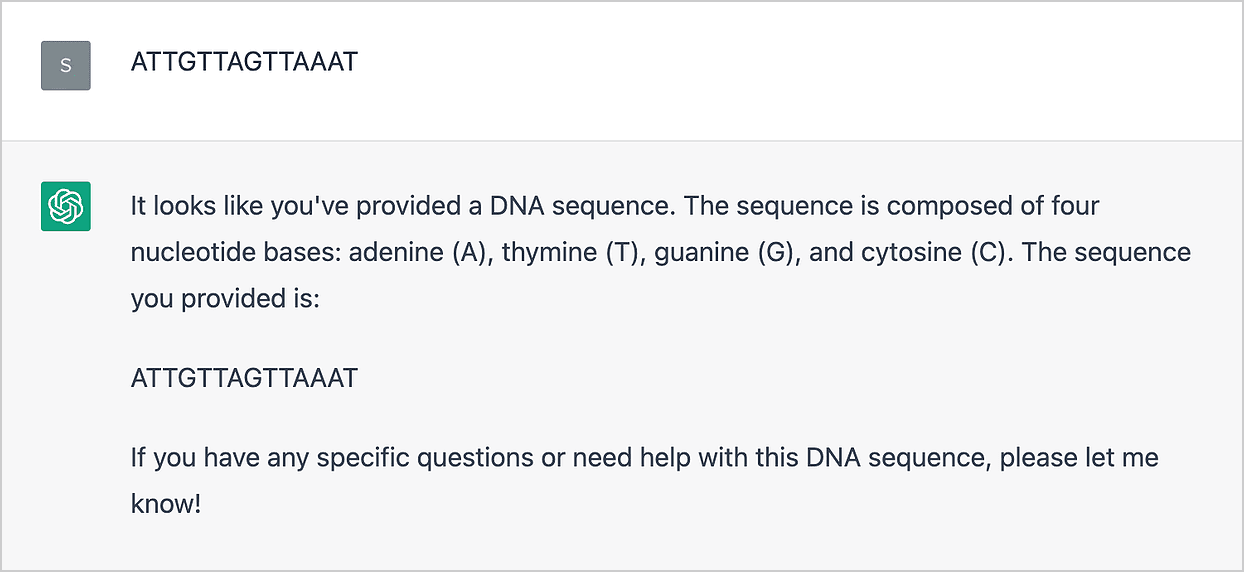

Eventually this will presumably be handled in training or in the prompt, but as of right now, ChatGPT sometimes doesn’t know when the Wolfram plugin can help. For example, ChatGPT guesses that this is supposed to be a DNA sequence, but (at least in this session) doesn’t immediately think the Wolfram plugin can do anything with it:

Say “Use Wolfram”, though, and it’ll send it to the Wolfram plugin, which indeed handles it nicely:

(You may sometimes also want to say specifically “Use Wolfram|Alpha” or “Use Wolfram Language”. And particularly in the Wolfram Language case, you may want to look at the actual code it sent, and tell it things like not to use functions whose names it came up with, but which don’t actually exist.)

When the Wolfram plugin is given Wolfram Language code, what it does is basically just to evaluate that code, and return the result—perhaps as a graphic or math formula, or just text. But when it’s given Wolfram|Alpha input, this is sent to a special Wolfram|Alpha “for LLMs” API endpoint, and the result comes back as text intended to be “read” by ChatGPT, and effectively used as an additional prompt for further text ChatGPT is writing. Take a look at this example:

The result is a nice piece of text containing the answer to the question asked, along with some other information ChatGPT decided to include. But “inside” we can see what the Wolfram plugin (and the Wolfram|Alpha “LLM endpoint”) actually did:

There’s quite a bit of additional information there (including some nice pictures!). But ChatGPT “decided” just to pick out a few pieces to include in its response.
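The idea that the endpoint's text is “effectively used as an additional prompt” can be pictured with a toy conversation log. Everything here is an assumption made for illustration: the message format, the `tool` role name, and the placeholder strings are hypothetical, and no real endpoint is called.

```python
from typing import Dict, List

Message = Dict[str, str]


def add_tool_result(messages: List[Message], tool_text: str) -> List[Message]:
    """Return a new conversation with the tool's text appended for the model to read."""
    return messages + [{"role": "tool", "content": tool_text}]


def render_prompt(messages: List[Message]) -> str:
    """Flatten the conversation into the single text the model continues from."""
    return "\n".join(f"{m['role']}: {m['content']}" for m in messages)


conversation = [{"role": "user", "content": "How tall is the tallest building?"}]
# Placeholder text, not a real Wolfram|Alpha response.
conversation = add_tool_result(conversation, "[plain-text result returned by the endpoint]")
print(render_prompt(conversation))
```

Because the tool output is just more text in the prompt, the model remains free to quote all of it, part of it, or none of it in what it writes next.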

By the way, something to emphasize is that if you want to be sure you’re getting what you think you’re getting, always check what ChatGPT actually sent to the Wolfram plugin—and what the plugin returned. One of the important things we’re adding with the Wolfram plugin is a way to “factify” ChatGPT output—and to know when ChatGPT is “using its imagination”, and when it’s delivering solid facts.

Sometimes in trying to understand what’s going on it’ll also be useful just to take what the Wolfram plugin was sent, and enter it as direct input on the Wolfram|Alpha website, or in a Wolfram Language system (such as the Wolfram Cloud).

Wolfram Language as the Language for Human-AI Collaboration

One of the great (and, frankly, unexpected) things about ChatGPT is its ability to start from a rough description, and generate from it a polished, finished output—such as an essay, letter, legal document, etc. In the past, one might have tried to achieve this “by hand” by starting with “boilerplate” pieces, then modifying them, “gluing” them together, etc. But ChatGPT has all but made this process obsolete. In effect, it’s “absorbed” a huge range of boilerplate from what it’s “read” on the web, etc.—and now it typically does a good job at seamlessly “adapting it” to what you need.

So what about code? In traditional programming languages writing code tends to involve a lot of “boilerplate work”—and in practice many programmers in such languages spend lots of their time building up their programs by copying big slabs of code from the web. But now, suddenly, it seems as if ChatGPT can make much of this obsolete. Because it can effectively put together essentially any kind of boilerplate code automatically—with only a little “human input”.

Of course, there has to be some human input—because otherwise ChatGPT wouldn’t know what program it was supposed to write. But—one might wonder—why does there have to be “boilerplate” in code at all? Shouldn’t one be able to have a language where—just at the level of the language itself—all that’s needed is a small amount of human input, without any of the “boilerplate dressing”?

Well, here’s the issue. Traditional programming languages are centered around telling a computer what to do in the computer’s terms: set this variable, test that condition, etc. But it doesn’t have to be that way. And instead one can start from the other end: take things people naturally think in terms of, then try to represent these computationally—and effectively automate the process of getting them actually implemented on a computer.

Well, this is what I’ve now spent more than four decades working on. And it’s the foundation of what’s now Wolfram Language—which I now feel justified in calling a “full-scale computational language”. What does this mean? It means that right in the language there’s a computational representation for both abstract and real things that we talk about in the world, whether those are graphs or images or differential equations—or cities or chemicals or companies or movies.

Why not just start with natural language? Well, that works up to a point—as the success of Wolfram|Alpha demonstrates. But once one’s trying to specify something more elaborate, natural language becomes (like “legalese”) at best unwieldy—and one really needs a more structured way to express oneself.

There’s a big example of this historically, in mathematics. Back before about 500 years ago, pretty much the only way to “express math” was in natural language. But then mathematical notation was invented, and math took off—with the development of algebra, calculus, and eventually all the various mathematical sciences.

My big goal with the Wolfram Language is to create a computational language that can do the same kind of thing for anything that can be “expressed computationally”. And to achieve this we’ve needed to build a language that both automatically does a lot of things, and intrinsically knows a lot of things. But the result is a language that’s set up so that people can conveniently “express themselves computationally”, much as traditional mathematical notation lets them “express themselves mathematically”. And a critical point is that—unlike traditional programming languages—Wolfram Language is intended not only for computers, but also for humans, to read. In other words, it’s intended as a structured way of “communicating computational ideas”, not just to computers, but also to humans.

But now—with ChatGPT—this suddenly becomes even more important than ever before. Because—as we began to see above—ChatGPT can work with Wolfram Language, in a sense building up computational ideas just using natural language. And part of what’s then critical is that Wolfram Language can directly represent the kinds of things we want to talk about. But what’s also critical is that it gives us a way to “know what we have”—because we can realistically and economically read Wolfram Language code that ChatGPT has generated.

The whole thing is beginning to work very nicely with the Wolfram plugin in ChatGPT. Here’s a simple example, where ChatGPT can readily generate a Wolfram Language version of what it’s being asked:

And the critical point is that the “code” is something one can realistically expect to read (if I were writing it, I would use the slightly more compact RomanNumeral function):

Here’s another example:

I might have written the code a little differently, but this is again something very readable:

It’s often possible to use a pidgin of Wolfram Language and English to say what you want:

Here’s an example where ChatGPT is again successfully constructing Wolfram Language—and conveniently shows it to us so we can confirm that, yes, it’s actually computing the right thing:

And, by the way, to make this work it’s critical that the Wolfram Language is in a sense “self-contained”. This piece of code is just standard generic Wolfram Language code; it doesn’t depend on anything outside, and if you wanted to, you could look up the definitions of everything that appears in it in the Wolfram Language documentation.

OK, one more example:

Obviously ChatGPT had trouble here. But—as it suggested—we can just run the code it generated, directly in a notebook. And because Wolfram Language is symbolic, we can explicitly see results at each step:

So close! Let’s help it a bit, telling it we need an actual list of European countries:

And there’s the result! Or at least, a result. Because when we look at this computation, it might not be quite what we want. For example, we might want to pick out multiple dominant colors per country, and see if any of them are close to purple. But the whole Wolfram Language setup here makes it easy for us to “collaborate with the AI” to figure out what we want, and what to do.

So far we’ve basically been starting with natural language, and building up Wolfram Language code. But we can also start with pseudocode, or code in some low-level programming language. And ChatGPT tends to do a remarkably good job of taking such things and producing well-written Wolfram Language code from them. The code isn’t always exactly right. But one can always run it (e.g. with the Wolfram plugin) and see what it does, potentially (courtesy of the symbolic character of Wolfram Language) line by line. And the point is that the high-level computational language nature of the Wolfram Language tends to allow the code to be sufficiently clear and (at least locally) simple that (particularly after seeing it run) one can readily understand what it’s doing—and then potentially iterate back and forth on it with the AI.

When what one’s trying to do is sufficiently simple, it’s often realistic to specify it—at least if one does it in stages—purely with natural language, using Wolfram Language “just” as a way to see what one’s got, and to actually be able to run it. But it’s when things get more complicated that Wolfram Language really comes into its own—providing what’s basically the only viable human-understandable-yet-precise representation of what one wants.

And when I was writing my book An Elementary Introduction to the Wolfram Language this became particularly obvious. At the beginning of the book I was easily able to make up exercises where I described what was wanted in English. But as things started getting more complicated, this became more and more difficult. As a “fluent” user of Wolfram Language I usually immediately knew how to express what I wanted in Wolfram Language. But to describe it purely in English required something increasingly involved and complicated, that read like legalese.

But, OK, so you specify something using Wolfram Language. Then one of the remarkable things ChatGPT is often able to do is to recast your Wolfram Language code so that it’s easier to read. It doesn’t (yet) always get it right. But it’s interesting to see it make different tradeoffs from a human writer of Wolfram Language code. For example, humans tend to find it difficult to come up with good names for things, making it usually better (or at least less confusing) to avoid names by having sequences of nested functions. But ChatGPT, with its command of language and meaning, has a fairly easy time making up reasonable names. And although it’s something I, for one, did not expect, I think using these names, and “spreading out the action”, can often make Wolfram Language code even easier to read than it was before, and indeed read very much like a formalized analog of natural language—that we can understand as easily as natural language, but that has a precise meaning, and can actually be run to generate computational results.

Cracking Some Old Chestnuts

If you “know what computation you want to do”, and you can describe it in a short piece of natural language, then Wolfram|Alpha is set up to directly do the computation, and present the results in a way that is “visually absorbable” as easily as possible. But what if you want to describe the result in a narrative, textual essay? Wolfram|Alpha has never been set up to do that. But ChatGPT is.

Here’s a result from Wolfram|Alpha:

And here within ChatGPT we’re asking for this same Wolfram|Alpha result, but then telling ChatGPT to “make an essay out of it”:

Another “old chestnut” for Wolfram|Alpha is math word problems. Given a “crisply presented” math problem, Wolfram|Alpha is likely to do very well at solving it. But what about a “woolly” word problem? Well, ChatGPT is pretty good at “unraveling” such problems, and turning them into “crisp math questions”—which then the Wolfram plugin can now solve. Here’s an example:

Here’s a slightly more complicated case, including a nice use of “common sense” to recognize that the number of turkeys cannot be negative:

Beyond math word problems, another “old chestnut” now addressed by

How to Get Involved

So how can you get involved in what promises to be an exciting period of rapid technological—and conceptual—growth? The first thing is just to explore

Find examples. Share them. Try to identify successful patterns of usage. And, most of all, try to find workflows that deliver the highest value. Those workflows could be quite elaborate. But they could also be quite simple—cases where once one sees what can be done, there’s an immediate “aha”.

How can you best implement a workflow? Well, we’re trying to work out the best workflows for that. Within Wolfram Language we’re setting up flexible ways to call on things like ChatGPT, both purely programmatically, and in the context of the notebook interface.

But what about from the ChatGPT side? Wolfram Language has a very open architecture, where a user can add or modify pretty much whatever they want. But how can you use this from ChatGPT? One thing is just to tell ChatGPT to include some specific piece of “initial” Wolfram Language code (maybe together with documentation)—then use something like the pidgin above to talk to ChatGPT about the functions or other things you’ve defined in that initial code.

We’re planning to build increasingly streamlined tools for handling and sharing Wolfram Language code for use by ChatGPT. But one approach that already works is to submit functions for publication in the Wolfram Function Repository, then—once they’re published—refer to these functions in your conversation with ChatGPT.

OK, but what about within ChatGPT itself? What kind of prompt engineering should you do to best interact with the Wolfram plugin? Well, we don’t know yet. It’s something that has to be explored—in effect as an exercise in AI education or AI psychology. A typical approach is to give some “pre-prompts” earlier in your ChatGPT session, then hope it’s “still paying attention” to those later on. (And, yes, it has a limited “attention span”, so sometimes things have to get repeated.)

We’ve tried to give an overall prompt to tell ChatGPT basically how to use the Wolfram plugin—and we fully expect this prompt to evolve rapidly, as we learn more, and as the ChatGPT LLM is updated. But you can add your own general pre-prompts, saying things like “When using Wolfram always try to include a picture” or “Use SI units” or “Avoid using complex numbers if possible”.

You can also try setting up a pre-prompt that essentially “defines a function” right in ChatGPT—something like: “If I give you an input consisting of a number, you are to use Wolfram to draw a polygon with that number of sides”. Or, more directly, “If I give you an input consisting of numbers you are to apply the following Wolfram function to that input …”, then give some explicit Wolfram Language code.
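If you keep your pre-prompts in a script rather than retyping them, the bookkeeping might look like the sketch below. It is only one possible arrangement: `build_session_prompt` and the `Instruction:`/`Input:` labels are hypothetical conventions, not anything ChatGPT or the Wolfram plugin requires.

```python
from typing import List


def build_session_prompt(pre_prompts: List[str], user_input: str) -> str:
    """Put the standing instructions first, then the user's actual input."""
    lines = [f"Instruction: {p}" for p in pre_prompts]
    lines.append(f"Input: {user_input}")
    return "\n".join(lines)


pre_prompts = [
    "Use SI units",
    "If I give you an input consisting of a number, you are to use Wolfram "
    "to draw a polygon with that number of sides",
]

# With the second pre-prompt in place, a bare number acts like a function call.
print(build_session_prompt(pre_prompts, "7"))
```

Rebuilding the full text for each message is one way to cope with the limited “attention span” mentioned above, since the instructions get repeated every time.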

But these are very early days, and no doubt there’ll be other powerful mechanisms discovered for “programming”

Some Background & Outlook

Even a week ago it wasn’t clear what

ChatGPT is basically a very large neural network, trained to follow the “statistical” patterns of text it’s seen on the web, etc. The concept of neural networks—in a form surprisingly close to what’s used in ChatGPT—originated all the way back in the 1940s. But after some enthusiasm in the 1950s, interest waned. There was a resurgence in the early 1980s (and indeed I myself first looked at neural nets then). But it wasn’t until 2012 that serious excitement began to build about what might be possible with neural nets. And now a decade later—in a development whose success came as a big surprise even to those involved—we have ChatGPT.

Rather separate from the “statistical” tradition of neural nets is the “symbolic” tradition for AI. And in a sense that tradition arose as an extension of the process of formalization developed for mathematics (and mathematical logic), particularly near the beginning of the twentieth century. But what was critical about it was that it aligned well not only with abstract concepts of computation, but also with actual digital computers of the kind that started to appear in the 1950s.

The successes in what could really be considered “AI” were for a long time at best spotty. But all the while, the general concept of computation was showing tremendous and growing success. But how might “computation” be related to ways people think about things? For me, a crucial development was my idea at the beginning of the 1980s (building on earlier formalism from mathematical logic) that transformation rules for symbolic expressions might be a good way to represent computations at what amounts to a “human” level.

At the time my main focus was on mathematical and technical computation, but I soon began to wonder whether similar ideas might be applicable to “general AI”. I suspected something like neural nets might have a role to play, but at the time I only figured out a bit about what would be needed—and not how to achieve it. Meanwhile, the core idea of transformation rules for symbolic expressions became the foundation for what’s now the Wolfram Language—and made possible the decades-long process of developing the full-scale computational language that we have today.
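To give a feel for what “transformation rules for symbolic expressions” means, here is a toy rewriter in Python. It is a deliberately tiny sketch and not how the Wolfram Language evaluator works: expressions are nested tuples such as `("Plus", "x", 0)`, and the two rules (`plus_zero`, `times_one`) are illustrative names chosen for this example.

```python
from typing import Callable, List, Optional, Union

Expr = Union[int, str, tuple]            # e.g. ("Plus", "x", 0) stands for x + 0
Rule = Callable[[Expr], Optional[Expr]]  # returns a replacement, or None if no match


def plus_zero(e: Expr) -> Optional[Expr]:
    # Rule: Plus[a, 0] -> a
    if isinstance(e, tuple) and len(e) == 3 and e[0] == "Plus" and e[2] == 0:
        return e[1]
    return None


def times_one(e: Expr) -> Optional[Expr]:
    # Rule: Times[a, 1] -> a
    if isinstance(e, tuple) and len(e) == 3 and e[0] == "Times" and e[2] == 1:
        return e[1]
    return None


def rewrite_once(e: Expr, rules: List[Rule]) -> Expr:
    """Rewrite the subexpressions first, then try each rule on the result."""
    if isinstance(e, tuple):
        e = (e[0],) + tuple(rewrite_once(arg, rules) for arg in e[1:])
    for rule in rules:
        replaced = rule(e)
        if replaced is not None:
            return replaced
    return e


def rewrite(e: Expr, rules: List[Rule]) -> Expr:
    """Apply the rules repeatedly until nothing changes (a fixed point)."""
    while True:
        new = rewrite_once(e, rules)
        if new == e:
            return e
        e = new


expr = ("Plus", ("Times", "x", 1), 0)    # (x * 1) + 0
print(rewrite(expr, [plus_zero, times_one]))
```

The point of the sketch is that the computation is expressed entirely as pattern-and-replacement pairs over a symbolic structure, with no variables being set or loops being written by the person stating the rules.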

Starting in the 1960s there’d been efforts among AI researchers to develop systems that could “understand natural language”, and “represent knowledge” and answer questions from it. Some of what was done turned into less ambitious but practical applications. But generally success was elusive. Meanwhile, as a result of what amounted to a philosophical conclusion of basic science I’d done in the 1990s, I decided around 2005 to make an attempt to build a general “computational knowledge engine” that could broadly answer factual and computational questions posed in natural language. It wasn’t obvious that such a system could be built, but we discovered that—with our underlying computational language, and with a lot of work—it could. And in 2009 we were able to release Wolfram|Alpha.

And in a sense what made Wolfram|Alpha possible was that internally it had a clear, formal way to represent things in the world, and to compute about them. For us, “understanding natural language” wasn’t something abstract; it was the concrete process of translating natural language to structured computational language.

Another part was assembling all the data, methods, models and algorithms needed to “know about” and “compute about” the world. And while we’ve greatly automated this, we’ve still always found that to ultimately “get things right” there’s no choice but to have actual human experts involved. And while there’s a little of what one might think of as “statistical AI” in the natural language understanding system of Wolfram|Alpha, the vast majority of Wolfram|Alpha—and Wolfram Language—operates in a hard, symbolic way that’s at least reminiscent of the tradition of symbolic AI. (That’s not to say that individual functions in Wolfram Language don’t use machine learning and statistical techniques; in recent years more and more do, and the Wolfram Language also has a whole built-in framework for doing machine learning.)

As I’ve discussed elsewhere, what seems to have emerged is that “statistical AI”, and particularly neural nets, are well suited for tasks that we humans “do quickly”, including—as we learn from ChatGPT—natural language and the “thinking” that underlies it. But the symbolic and in a sense “more rigidly computational” approach is what’s needed when one’s building larger “conceptual” or computational “towers”—which is what happens in math, exact science, and now all the “computational X” fields.

And now

When we were first building Wolfram|Alpha we thought that perhaps to get useful results we’d have no choice but to engage in a conversation with the user. But we discovered that if we immediately generated rich, “visually scannable” results, we only needed a simple “Assumptions” or “Parameters” interaction—at least for the kind of information and computation seeking we expected of our users. (In Wolfram|Alpha Notebook Edition we nevertheless have a powerful example of how multistep computation can be done with natural language.)

Back in 2010 we were already experimenting with generating not just the Wolfram Language code of typical Wolfram|Alpha queries from natural language, but also “whole programs”. At the time, however—without modern LLM technology—that didn’t get all that far. But what we discovered was that—in the context of the symbolic structure of the Wolfram Language—even having small fragments of what amounts to code be generated by natural language was extremely useful. And indeed I, for example, use the ctrl= mechanism in Wolfram Notebooks countless times almost every day, for example to construct symbolic entities or quantities from natural language. We don’t yet know quite what the modern “LLM-enabled” version of this will be, but it’s likely to involve the rich human-AI “collaboration” that we discussed above, and that we can begin to see in action for the first time in

I see what’s happening now as a historic moment. For well over half a century the statistical and symbolic approaches to what we might call “AI” evolved largely separately. But now, in
