Performance Capacity of a Complex Neural Network


• Physics 16, 108

A new theory allows researchers to determine the ability of arbitrarily complex neural networks to perform recognition tasks on data with intricate structure.

solvod/stock.adobe.com

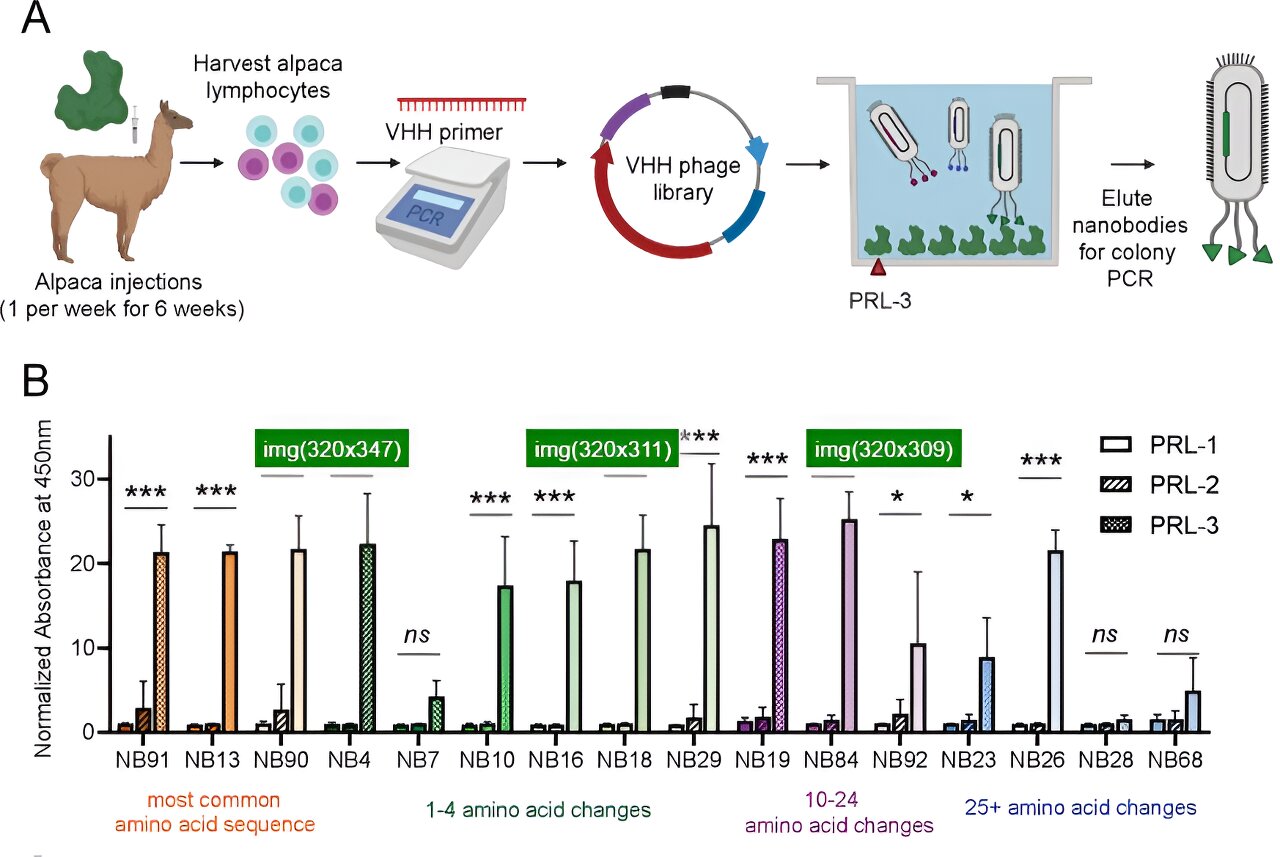

Every day, our brain recognizes and discriminates among the many thousands of sensory signals that it encounters. Today's best artificial intelligence models, many of which are inspired by neural circuits in the brain, have similar abilities. For example, the so-called deep convolutional neural networks used for object recognition and classification are inspired by the layered structure of the visual cortex. However, scientists have yet to develop a full mathematical understanding of how biological or artificial intelligence systems achieve this recognition ability. Now SueYeon Chung of the Flatiron Institute in New York and her colleagues have developed a more detailed description of how the geometric representation of objects in biological and artificial neural networks relates to the networks' performance on classification tasks [1] (Fig. 1). The researchers show that their theory can accurately estimate the performance capacity of an arbitrarily complex neural network, a problem that other methods have struggled to solve.

Neural networks provide coarse-grained descriptions of the complex circuits of biological neurons in the brain. They consist of highly simplified neurons that signal one another via synapses, the connections between pairs of neurons. The strengths of the synaptic connections change when a network is trained to perform a particular task.

During each stage of a task, groups of neurons receive input from many other neurons in the network and fire when their activity exceeds a given threshold. This firing produces an activity pattern, which can be represented as a point in a high-dimensional state space in which each neuron corresponds to a different dimension. The collection of activity patterns corresponding to a specific input forms a "manifold" representation in that state space. The geometric properties of manifold representations in neural networks depend on how information is distributed in the network, and the way these representations evolve during a task is shaped by the algorithms used to train the network to perform that task.
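
The picture of activity patterns and manifolds can be made concrete with a toy layer. The sketch below is an illustration under stated assumptions, not the authors' model: it assumes rectified-linear neurons (a neuron's rate is the amount by which its summed input exceeds its threshold, and zero otherwise), random weights, and small random jitter as a stand-in for variations of one stimulus. The helper names `layer_response` and `manifold_geometry` are hypothetical. The geometry it reports (mean distance from the centroid, and a participation-ratio estimate of dimension) is a simple proxy, not the exact radius and dimension definitions used in [2].

```python
import math
import random


def layer_response(weights, thresholds, stimulus):
    """Activity pattern of one layer (assumed rectified-linear units): neuron i
    has a nonzero rate only when its summed input exceeds its threshold."""
    return [
        max(0.0, sum(w * s for w, s in zip(row, stimulus)) - th)
        for row, th in zip(weights, thresholds)
    ]


def manifold_geometry(points):
    """Centroid, mean radius, and participation-ratio dimension of a point cloud.

    Each point is one activity pattern, i.e. one point in the N-dimensional
    state space (one coordinate per neuron)."""
    n_pts, dim = len(points), len(points[0])
    centroid = [sum(p[i] for p in points) / n_pts for i in range(dim)]
    deltas = [[p[i] - centroid[i] for i in range(dim)] for p in points]
    # Mean Euclidean distance of the patterns from the manifold's center.
    radius = sum(math.sqrt(sum(d * d for d in delta)) for delta in deltas) / n_pts
    # Covariance of the patterns around the centroid.
    cov = [[sum(d[i] * d[j] for d in deltas) / n_pts for j in range(dim)]
           for i in range(dim)]
    # Participation ratio (sum of eigenvalues)^2 / (sum of squared eigenvalues),
    # computed as trace(C)^2 / ||C||_F^2 so no eigendecomposition is needed.
    trace = sum(cov[i][i] for i in range(dim))
    frob_sq = sum(c * c for row in cov for c in row)
    dimension = trace ** 2 / frob_sq if frob_sq > 0 else 0.0
    return centroid, radius, dimension


# Usage: 50 jittered versions of one stimulus, seen by a layer of 30 neurons.
rng = random.Random(0)
n_inputs, n_neurons = 10, 30
weights = [[rng.gauss(0, 1) for _ in range(n_inputs)] for _ in range(n_neurons)]
thresholds = [0.5] * n_neurons
stimulus = [rng.gauss(0, 1) for _ in range(n_inputs)]
variants = [[s + rng.gauss(0, 0.1) for s in stimulus] for _ in range(50)]
manifold = [layer_response(weights, thresholds, v) for v in variants]
centroid, radius, dimension = manifold_geometry(manifold)
print(f"radius={radius:.3f}, dimension={dimension:.2f}")
```

The participation ratio equals 1 when all the variability lies along a single direction and equals the number of directions when the variability is spread evenly across them, which makes it a convenient stand-in for "number of dimensions" in a short example.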

The geometries of a network's manifolds also constrain the network's capacity to perform tasks such as invariant object recognition: the ability of a network to accurately recognize objects regardless of variations in their appearance, including size, position, or background (Fig. 2). In a previous attempt to understand these constraints, Chung and a different group of colleagues studied simple binary classification tasks, in which the network must sort stimuli into two groups based on some classification rule [2]. In such tasks, the capacity of a network is defined as the maximum number of objects that it can correctly classify when the objects are randomly assigned category labels.
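
This capacity definition can be probed numerically. The following sketch assumes the simplest setting described above: each object is a single random point in the state space of N neurons, labels are drawn at random, and the readout is a perceptron (a single threshold unit whose decision boundary is a hyperplane through the origin) trained with Rosenblatt's classic learning rule. The helper names `train_perceptron` and `random_task` are hypothetical, and this brute-force check is not the analytical method of [2].

```python
import random


def train_perceptron(points, labels, max_epochs=200):
    """Rosenblatt's rule for a readout w with no bias term.

    Returns (w, converged); converged is True once label * (w . x) > 0 for
    every object, i.e. all of the random labels are reproduced."""
    w = [0.0] * len(points[0])
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(points, labels):
            if y * sum(wi * xi for wi, xi in zip(w, x)) <= 0:
                # Synaptic update: move w toward the correct side of x.
                w = [wi + y * xi for wi, xi in zip(w, x)]
                mistakes += 1
        if mistakes == 0:
            return w, True
    # Hitting the epoch limit suggests, but does not prove, that no
    # separating hyperplane exists.
    return w, False


def random_task(n_objects, n_neurons, rng):
    """Hypothetical task: random Gaussian points with random +1/-1 labels."""
    points = [[rng.gauss(0, 1) for _ in range(n_neurons)] for _ in range(n_objects)]
    labels = [rng.choice((-1, 1)) for _ in range(n_objects)]
    return points, labels


rng = random.Random(1)
n_neurons = 20
few = random_task(8, n_neurons, rng)    # well below 2N objects
many = random_task(80, n_neurons, rng)  # well above 2N objects
print(train_perceptron(*few)[1], train_perceptron(*many)[1])
```

With few objects per neuron, the rule finds weights that reproduce every random label; with many objects per neuron, a consistent set of weights almost never exists, so training runs out of epochs. Scanning the number of objects between these extremes gives a rough empirical estimate of capacity.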

For networks in which each object corresponds to a single point in state space, a single-layer network with N neurons can correctly classify up to 2N randomly labeled objects; beyond this limit, most random labelings can no longer be reproduced without error. The formalism developed by Chung and her colleagues allowed them to study the performance of complex deep (multilayer) neural networks trained for object classification. Constructing the manifold representations from the images used to train such a network, they found that the mean radius and number of dimensions of the manifolds estimated from the data decreased sharply in deeper layers of the network relative to shallower layers. This decrease was accompanied by an increase in the classification capacity of the system [2–4].
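
The 2N figure for single points can be checked with a classic counting argument that predates this work: Cover's function-counting theorem (1965), used here as a standard illustration rather than as the method of [2–4]. For P points in general position in N dimensions, a hyperplane through the origin can realize C(P, N) = 2 [binom(P - 1, 0) + binom(P - 1, 1) + ... + binom(P - 1, N - 1)] of the 2^P possible labelings. The helper name `separable_fraction` is hypothetical.

```python
from fractions import Fraction
from math import comb


def separable_fraction(n_points, n_neurons):
    """Fraction of the 2**P labelings of P points (general position, N dimensions)
    that a hyperplane through the origin can realize, per Cover's theorem."""
    # comb(n, k) returns 0 when k > n, so P <= N gives a fraction of exactly 1.
    count = 2 * sum(comb(n_points - 1, k) for k in range(n_neurons))
    return Fraction(count, 2 ** n_points)


n_neurons = 50
for n_points in (n_neurons, 2 * n_neurons, 3 * n_neurons):
    frac = separable_fraction(n_points, n_neurons)
    print(f"P = {n_points:3d}: separable fraction = {float(frac):.6f}")
```

At P = 2N the fraction is exactly one-half for every N, and as N grows the drop from nearly 1 to nearly 0 around P = 2N becomes increasingly sharp, which is why 2N objects per N neurons is quoted as the capacity for point-like representations.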

This earlier study and others, however, did not consider correlations between different object representations when calculating network capacity. It is well known that object representations in biological and artificial neural networks exhibit intricate correlations, which arise from structural features in the underlying data. These correlations can have important consequences for many tasks, including classification, because they are reflected in different levels of similarity between pairs of classes in neural space. For example, in a network tasked with classifying whether an animal is a mammal, the dog and wolf manifold representations are likely to be more similar than those of an eagle and a falcon.
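
One simple way to quantify the class-pair similarity described above is the cosine similarity between manifold centroids. The sketch assumes a hypothetical hierarchical data set in which each class centroid is a superclass prototype ("mammal" or "bird") plus class-specific noise; the class names only label synthetic vectors, not measured representations, and this measure is an illustration rather than the correlation model of [1]. The helper names `cosine` and `hierarchical_centroids` are hypothetical.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two centroids in neural state space."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def hierarchical_centroids(tree, dim, noise, rng):
    """Hypothetical structured data: every class centroid shares its
    superclass prototype, which induces correlations between classes."""
    centroids = {}
    for classes in tree.values():
        prototype = [rng.gauss(0, 1) for _ in range(dim)]
        for name in classes:
            centroids[name] = [p + noise * rng.gauss(0, 1) for p in prototype]
    return centroids


rng = random.Random(2)
tree = {"mammal": ["dog", "wolf"], "bird": ["eagle", "falcon"]}
c = hierarchical_centroids(tree, dim=100, noise=0.5, rng=rng)
for a, b in [("dog", "wolf"), ("eagle", "falcon"), ("dog", "eagle")]:
    print(f"{a}-{b}: {cosine(c[a], c[b]):.2f}")
```

Classes that share a prototype end up with strongly aligned centroids, while classes from different superclasses are nearly orthogonal in this high-dimensional toy space; this uneven similarity structure is the kind of correlation that earlier capacity calculations ignored.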

Now Chung's group has generalized its performance-capacity calculation for deep neural networks to include correlations between object classes [1]. The group derived a set of self-consistent equations that can be solved to give the network capacity for a system with homogeneous correlations between the so-called axes (the dimensions along which a manifold varies) and centroids (the centers of the manifolds) of different manifolds. The researchers show that axis correlations between manifolds increase performance capacity, whereas centroid correlations push the manifolds closer to the origin of the neural state space, reducing performance capacity.
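
The effect of homogeneous centroid correlations can be illustrated with a toy construction. This is a sketch of the intuition only, not the self-consistent equations of [1], and it does not model axis correlations. It assumes each centroid is built as sqrt(rho) times a shared vector plus sqrt(1 - rho) times a class-specific vector, with the same correlation coefficient rho for every pair (the "homogeneous" case). Because the shared component carries no information about which class is which, subtracting it (estimated here by the mean centroid) leaves centroids whose norms shrink by a factor of sqrt(1 - rho), so the manifolds effectively sit closer to the origin. The helper names `correlated_centroids` and `effective_centroid_norm` are hypothetical.

```python
import math
import random


def correlated_centroids(n_classes, dim, rho, rng):
    """Centroids with homogeneous correlation rho: each one mixes a shared
    vector with an independent class-specific vector."""
    shared = [rng.gauss(0, 1) for _ in range(dim)]
    return [
        [math.sqrt(rho) * s + math.sqrt(1.0 - rho) * rng.gauss(0, 1) for s in shared]
        for _ in range(n_classes)
    ]


def effective_centroid_norm(centroids):
    """Mean norm of the centroids after removing their common (mean) component."""
    n, dim = len(centroids), len(centroids[0])
    mean = [sum(c[i] for c in centroids) / n for i in range(dim)]
    return sum(
        math.sqrt(sum((c[i] - mean[i]) ** 2 for i in range(dim))) for c in centroids
    ) / n


# Same random seed for both cases, so only rho differs between them.
uncorrelated = correlated_centroids(20, 100, 0.0, random.Random(3))
correlated = correlated_centroids(20, 100, 0.8, random.Random(3))
ratio = effective_centroid_norm(correlated) / effective_centroid_norm(uncorrelated)
print(f"shrinkage factor = {ratio:.4f}, sqrt(1 - rho) = {math.sqrt(0.2):.4f}")
```

Reusing the seed makes the comparison exact: the class-specific draws are identical in both cases, so the measured shrinkage matches sqrt(1 - rho) up to floating-point rounding.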

Over the past few years, the study of neural networks has seen many exciting developments, and a growing number of data-analysis tools are being developed to better characterize the geometry of representations obtained from neural data. The new results make a substantial contribution to this area, as they can be used to study the properties of learned representations in networks trained on a wide variety of tasks in which correlations in the input data may play a crucial role in learning and performance. These tasks include those related to motor coordination, natural language, and probing the relational structure of abstract knowledge.

References

- A. J. Wakhloo et al., “Linear classification of neural manifolds with correlated variability,” Phys. Rev. Lett. 131, 027301 (2023).

- S. Y. Chung et al., "Classification and geometry of general perceptual manifolds," Phys. Rev. X 8, 031003 (2018).

- E. Gardner, "The space of interactions in neural network models," J. Phys. A: Math. Gen. 21, 257 (1988).

- U. Cohen et al., “Separability and geometry of object manifolds in deep neural networks,” Nat. Commun. (2019).
