Version 13.3 of Wolfram Language and Mathematica: Stephen Wolfram Writings

The Leading Edge of 2023 Technology … and Beyond

Today we’re releasing Version 13.3 of Wolfram Language and Mathematica, both available immediately on desktop and cloud. It’s only been 196 days since we released Version 13.2, but there’s a lot that’s new, not least a whole subsystem around LLMs.

Last Friday (June 23) we celebrated 35 years since Version 1.0 of Mathematica (and what’s now Wolfram Language). And to me it’s incredible how far we’ve come in these 35 years, yet how consistent we’ve been in our mission and goals, and how well we’ve been able to just keep building on the foundations we created all those years ago.

And when it comes to what’s now Wolfram Language, there’s a wonderful timelessness to it. We’ve worked very hard to make its design as clean and coherent as possible, and to make it a timeless way to elegantly represent computation and everything that can be described through it.

Last Friday I fired up Version 1 on an old Mac SE/30 computer (with 2.5 megabytes of memory), and it was a thrill to see functions like Plot and NestList work just as they would today, albeit a lot slower. And it was wonderful to be able to take (on a floppy disk) the notebook I created with Version 1 and have it immediately come to life on a modern computer.

But even as we’ve maintained compatibility over all these years, the scope of our system has grown out of all recognition, with everything in Version 1 now occupying but a small sliver of the whole range of functionality of the modern Wolfram Language:

So much about Mathematica was ahead of its time in 1988, and perhaps even more about Mathematica and the Wolfram Language is ahead of its time today, 35 years later. From the whole idea of symbolic programming, to the concept of notebooks, the universal applicability of symbolic expressions, the notion of computational knowledge, and concepts like instant APIs and so much more, we’ve been energetically continuing to push the frontier over all these years.

Our long-term objective has been to build a full-scale computational language that can represent everything computationally, in a way that’s effective for both computers and humans. And now, in 2023, there’s a new significance to this. Because with the advent of LLMs our language has become a unique bridge between humans, AIs and computation.

The attributes that make Wolfram Language easy for humans to write, yet rich in expressive power, also make it ideal for LLMs to write. And, unlike traditional programming languages, Wolfram Language is intended not only for humans to write, but also to read and think in. So it becomes the medium through which humans can confirm or correct what LLMs do, to deliver computational language code that can be confidently assembled into a larger system.

The Wolfram Language wasn’t originally designed with the recent success of LLMs in mind. But I think it’s a tribute to the strength of its design that it now fits so well with LLMs, with so much synergy. The Wolfram Language is important to LLMs, in providing a way to access computation and computational knowledge from within the LLM. But LLMs are also important to Wolfram Language, in providing a rich linguistic interface to the language.

We’ve always built, and deployed, Wolfram Language so it can be accessible to as many people as possible. But the introduction of LLMs, and our new Chat Notebooks, opens up Wolfram Language to vastly more people. Wolfram|Alpha lets anyone use natural language, without prior knowledge, to get questions answered. Now with LLMs it’s possible to use natural language to start defining potentially elaborate computations.

As soon as you’ve formulated your thoughts in computational terms, you can immediately “explain them to an LLM”, and have it produce precise Wolfram Language code. Often when you look at that code you’ll realize you didn’t explain yourself quite right, and either the LLM or you can tighten up your code. But anyone, without any prior knowledge, can now get started producing serious Wolfram Language code. And that’s very important in seeing Wolfram Language realize its potential to drive “computational X” for the widest possible range of

But while LLMs are “the biggest single story” in Version 13.3, there’s a lot else in Version 13.3 too, delivering the latest from our long-term research and development pipeline. So, yes, in Version 13.3 there’s new functionality not only in LLMs but also in many “classic” areas, as well as in new areas having nothing to do with LLMs.

Across the 35 years since Version 1 we’ve been able to continue accelerating our research and development process, year by year building on the functionality and automation we’ve created. And we’ve also continually honed our actual process of research and development, for the past 5 years sharing our design meetings on open livestreams.

Version 13.3 is, from its name, an “incremental release”. But, particularly with its new LLM functionality, it continues our tradition of delivering a long list of important advances and updates, even in incremental releases.

LLM Tech Comes to Wolfram Language

LLMs make possible many important new things in the Wolfram Language. And since I’ve been discussing these in a series of recent posts, I’ll give only a fairly short summary here. More details are in the other posts, both ones that have appeared, and ones that will appear soon.

The most immediately visible LLM tech in Version 13.3 is Chat Notebooks. Go to

You might not like some details of what got done (do you really want those boldface labels?) but I consider this pretty impressive. And it’s a great example of using an LLM as a “linguistic interface” with common sense, that can generate precise computational language, which can then be run to get a result.

This is all very new technology, so we don’t yet know what patterns of usage will work best. But I think it’s going to go like this. First, you have to think computationally about whatever you’re trying to do. Then you tell it to the LLM, and it’ll produce Wolfram Language code that represents what it thinks you want to do. You might just run that code (or the Chat Notebook will do it for you), and see if it produces what you want. Or you might read the code, and see if it’s what you want. But either way, you’ll be using computational language (Wolfram Language) as the medium to formalize and express what you’re trying to do.

When you’re doing something you’re familiar with, it’ll almost always be faster and better to think directly in Wolfram Language, and just enter the computational language code you want. But if you’re exploring something new, or just getting started on something, the LLM is likely to be a really valuable way to “get you to first code”, and to start the process of crispening up what you want in computational terms.

If the LLM doesn’t do exactly what you want, then you can tell it what it did wrong, and it’ll try to correct it, though sometimes you can end up doing a lot of explaining and having quite a long dialog (and, yes, it’s often vastly easier just to type Wolfram Language code yourself):

Sometimes the LLM will notice for itself that something went wrong, and try changing its code, and rerunning it:

And even if it didn’t write a piece of code itself, it’s pretty good at piping up to explain what’s going on when an error is generated:

And actually it’s got a big advantage here, because “under the hood” it can look at lots of details (like stack trace, error documentation, etc.) that humans usually don’t bother with.

To support all this interaction with LLMs, there’s all kinds of new structure in the Wolfram Language. In Chat Notebooks there are chat cells, and there are chatblocks (indicated by gray bars, and generated with ~) that delimit the range of chat cells that will be fed to the LLM when you press Shift+Enter on a new chat cell. And, by the way, the whole mechanism of cells, cell groups, etc. that we invented 36 years ago now turns out to be extremely powerful as a foundation for Chat Notebooks.

One can think of the LLM as a kind of “alternate evaluator” in the notebook. And there are various ways to set up and control it. The most immediate is in the menu associated with every chat cell and every chatblock (and also available in the notebook toolbar):

The first items here let you define the “persona” for the LLM. Is it going to act as a Code Assistant that writes code and comments on it? Or is it just going to be a Code Writer, that writes code without being wordy about it? Then there are some “fun” personas, like Wolfie and Birdnardo, that respond “with an attitude”. The Advanced Settings let you do things like set the underlying LLM model you want to use, and also what tools (like Wolfram Language code evaluation) you want to connect to it.

Ultimately personas are mostly just special prompts for the LLM (together, sometimes, with tools, etc.). And one of the new things we’ve recently launched to support LLMs is the Wolfram Prompt Repository:

The Prompt Repository contains several kinds of prompts. The first are personas, which are used to “style” and otherwise inform chat interactions. But then there are two other kinds of prompts: function prompts, and modifier prompts.

Function prompts are for getting the LLM to do something specific, like summarize a piece of text, or suggest a joke (it’s not terribly good at that). Modifier prompts are for determining how the LLM should modify its output, for example translating into a different human language, or keeping it to a certain length.

You can pull in function prompts from the repository into a Chat Notebook by using !, and modifier prompts using #. There’s also a ^ notation for saying that you want the “input” to the function prompt to be the cell above:

This is how you can access LLM functionality from within a Chat Notebook. But there’s also a whole symbolic programmatic way to access LLMs that we’ve added to the Wolfram Language. Central to this is LLMFunction, which acts very much like a Wolfram Language pure function, except that it gets “evaluated” not by the Wolfram Language kernel, but by an LLM:

You can access a function prompt from the Prompt Repository using LLMResourceFunction:

There’s also a symbolic representation for chats. Here’s an empty chat:

And here now we “say something”, and the LLM responds:

There’s lots of depth to both Chat Notebooks and LLM functions, as I’ve described elsewhere. There’s LLMExampleFunction for getting an LLM to follow examples you give. There’s LLMTool for giving an LLM a way to call functions in the Wolfram Language as “tools”. And there’s LLMSynthesize which provides raw access to the LLM and its text completion and other capabilities. (And controlling all of this is $LLMEvaluator which defines the default LLM configuration to use, as specified by an LLMConfiguration object.)
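The idea of a function that is “evaluated” by an LLM rather than by the kernel can be sketched outside Wolfram Language too. What follows is a rough Python illustration, not the LLMFunction API: `make_prompt_function` and `echo_evaluator` are hypothetical names invented here, and the evaluator is a stand-in that merely echoes the prompt instead of calling a model.

```python
from typing import Callable


def make_prompt_function(template: str,
                         evaluator: Callable[[str], str]) -> Callable[..., str]:
    """Return a function that fills the template's slots and hands the prompt to `evaluator`."""
    def prompt_function(*args: object) -> str:
        prompt = template.format(*args)  # fill the {0}, {1}, ... slots positionally
        return evaluator(prompt)         # "evaluation" is delegated, not done locally
    return prompt_function


def echo_evaluator(prompt: str) -> str:
    """Hypothetical stand-in for a model call: it only reports the prompt it received."""
    return f"[model would answer: {prompt}]"


haiku = make_prompt_function("Write a haiku about {0}", echo_evaluator)
print(haiku("integrals"))
```

The only point of the sketch is the shape: a template with slots, arguments supplied like ordinary function arguments, and an interchangeable evaluator.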

I consider it rather impressive that we’ve been able to get to the level of support for LLMs that we have in Version 13.3 in less than six months (along with building things like the Wolfram Plugin for ChatGPT, and the Wolfram ChatGPT Plugin Kit). But there’s going to be more to come, with LLM functionality increasingly integrated into Wolfram Language and Notebooks, and, yes, Wolfram Language functionality increasingly integrated as a tool into LLMs.

Line, Surface and Contour Integration

“Find the integral of the function ___” is a typical core thing one wants to do in calculus. And in Mathematica and the Wolfram Language that’s achieved with Integrate. But particularly in applications of calculus, it’s common to want to ask slightly more elaborate questions, like “What’s the integral of ___ over the region ___?”, or “What’s the integral of ___ along the line ___?”

Almost a decade ago (in Version 10) we introduced a way to specify integration over regions, just by giving the region “geometrically” as the domain of the integral:

It had always been possible to write out such an integral in “standard Integrate” form

but the region specification is much more convenient, as well as being much more efficient to process.

Finding an integral along a line is also something that can ultimately be done in “standard Integrate” form. And if you have an explicit (parametric) formula for the line this is typically fairly straightforward. But if the line is specified in a geometrical way then there’s real work to do to even set up the problem in “standard Integrate” form. So in Version 13.3 we’re introducing the function LineIntegrate to automate this.
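To make the “explicit parametric formula” case concrete, here is a small numerical sketch in Python (not Wolfram Language, and not how LineIntegrate works internally). It assumes the curve r(t) and its tangent r'(t) are supplied by hand, and approximates the integral of F(r(t)) · r'(t) dt with a midpoint rule; the function name `line_integral` and both example fields are illustrative choices.

```python
import math


def line_integral(field, curve, tangent, t0, t1, steps=20000):
    """Midpoint-rule approximation of the integral of F(r(t)) . r'(t) dt over [t0, t1]."""
    h = (t1 - t0) / steps
    total = 0.0
    for i in range(steps):
        t = t0 + (i + 0.5) * h
        fx, fy = field(*curve(t))
        dx, dy = tangent(t)
        total += (fx * dx + fy * dy) * h
    return total


# Straight line from (0, 0) to (1, 2) with F(x, y) = (x, y): the integrand is 5 t.
straight = line_integral(lambda x, y: (x, y),
                         lambda t: (t, 2 * t),
                         lambda t: (1.0, 2.0),
                         0.0, 1.0)

# Unit circle with F(x, y) = (-y, x): the integrand is identically 1.
circular = line_integral(lambda x, y: (-y, x),
                         lambda t: (math.cos(t), math.sin(t)),
                         lambda t: (-math.sin(t), math.cos(t)),
                         0.0, 2 * math.pi)

print(straight, circular)  # approximately 2.5 and 2*pi
```

The manual steps here (choosing a parametrization and differentiating it to get the tangent) are exactly the setup work that becomes awkward when the line is only given geometrically.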

LineIntegrate can deal with integrating both scalar and vector functions over lines. Here’s an example where the line is just a straight line:

But LineIntegrate also works for lines that aren’t straight, like this parametrically specified one:

To compute the integral also requires finding the tangent vector at every point on the curve, but LineIntegrate automatically does that:

Line integrals are common in applications of calculus to physics. But perhaps even more common are surface integrals, representing for example total flux through a surface. And in Version 13.3 we’re introducing SurfaceIntegrate. Here’s a fairly straightforward integral of flux that goes radially outward through a sphere:

Here’s a more complicated case:

And here’s what the actual vector field looks like on the surface of the dodecahedron:

LineIntegrate and SurfaceIntegrate deal with integrating scalar and vector functions in Euclidean space. But in Version 13.3 we’re also handling another kind of integration: contour integration in the complex plane.
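As a purely numerical illustration of what a contour integral measures (a Python sketch, not the symbolic machinery in Wolfram Language), one can sample a circular contour and sum f(z) dz. The function name `circle_contour_integral` is an assumption made for this example. For f(z) = 1/z the result should be 2πi when the circle encloses the pole at 0, and 0 when it does not.

```python
import cmath
import math


def circle_contour_integral(f, center, radius, steps=4096):
    """Approximate the counterclockwise integral of f(z) dz around a circle."""
    h = 2 * math.pi / steps
    total = 0j
    for k in range(steps):
        theta = (k + 0.5) * h
        direction = cmath.exp(1j * theta)
        z = center + radius * direction
        dz = 1j * radius * direction * h  # derivative of z(theta) times the step
        total += f(z) * dz
    return total


enclosing = circle_contour_integral(lambda z: 1 / z, 0, 1.0)
missing = circle_contour_integral(lambda z: 1 / z, 3, 1.0)
print(enclosing, missing)  # approximately 2*pi*i and 0
```

The value depends only on which poles lie inside the contour, not on the contour’s exact size or position.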

We can start with a classic contour integral, illustrating Cauchy’s theorem:

Here’s a slightly more elaborate complex function

and here’s its integral around a circular contour:

Needless to say, this still gives the same result, since the new contour still encloses the same poles:

More impressively, here’s the result for an arbitrary radius of contour:

And here’s a plot of the (imaginary part of the) result:

Contours can be of any shape:

The result for the contour integral depends on whether the pole is inside the “Pac-Man”:

Another Milestone for Special Functions

One can think of special functions as a way of “modularizing” mathematical results. It’s often a challenge to know that something can be expressed in terms of special functions. But once one’s done this, one can immediately apply the independent knowledge that exists about the special functions.

Even in Version 1.0 we already supported many special functions. And over the years we’ve added support for many more, to the point where we now cover everything that might reasonably be considered a “classical” special function. But in recent years we’ve also been tackling more general special functions. They’re mathematically more complex, but each one we successfully cover makes a new collection of problems accessible to exact solution and reliable numerical and symbolic computation.

Most of the “classic” special functions (like Bessel functions, Legendre functions, elliptic integrals, etc.) are in the end univariate hypergeometric functions. But one important frontier in “general special functions” is those corresponding to bivariate hypergeometric functions. And already in Version 4.0 (1999) we introduced one example of such a function: AppellF1. And, yes, it’s taken a while, but now in Version 13.3 we’ve finally finished doing the math and creating the algorithms to introduce AppellF2, AppellF3 and AppellF4.

On the face of it, it’s just another function, with lots of arguments, whose value we can find to any precision:

Occasionally it has a closed form:

But despite its mathematical sophistication, plots of it tend to look fairly uninspiring:

Series expansions begin to show a little more:

And ultimately this is a function that solves a pair of PDEs that can be seen as a generalization to two variables of the univariate hypergeometric ODE. So what other generalizations are possible? Paul Appell spent many years around the turn of the twentieth century looking, and came up with just 4, which as of Version 13.3 now all appear in the Wolfram Language, as AppellF1, AppellF2, AppellF3 and AppellF4.
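To give a feel for what “bivariate hypergeometric” means, here is a minimal Python sketch (not the Wolfram Language implementation) that sums the standard double power series for Appell’s first function, with coefficients (a)_{m+n} (b1)_m (b2)_n / ((c)_{m+n} m! n!) multiplying x^m y^n. The function name `appell_f1_series` and the fixed truncation are assumptions of this example; the series only converges for |x| < 1 and |y| < 1, and c must not be zero or a negative integer.

```python
import math


def appell_f1_series(a, b1, b2, c, x, y, terms=60):
    """Truncated double series for Appell F1, built from ratios of consecutive terms."""
    total = 0.0
    row_start = 1.0  # series term with indices (m, 0)
    for m in range(terms):
        term = row_start
        for n in range(terms):
            total += term
            # ratio term(m, n+1) / term(m, n)
            term *= (a + m + n) * (b2 + n) / ((c + m + n) * (n + 1)) * y
        # ratio term(m+1, 0) / term(m, 0)
        row_start *= (a + m) * (b1 + m) / ((c + m) * (m + 1)) * x
    return total


# With y = 0 the series collapses to the univariate 2F1(a, b1; c; x);
# for a = b1 = 1, c = 2 that is -log(1 - x) / x.
reduced = appell_f1_series(1, 1, 0.5, 2, 0.5, 0.0)
print(reduced, -math.log(0.5) / 0.5)
```

Setting one variable to zero recovers the univariate hypergeometric series, which is the sense in which the Appell functions generalize it to two variables.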

To make special functions useful in the Wolfram Language they need to be “knitted” into other capabilities of the language, from numerical evaluation to series expansion, calculus, equation solving, and integral transforms. And in Version 13.3 we’ve passed another special function milestone, around integral transforms.

When I started using special functions in the 1970s the main source of information about them tended to be a small number of handbooks that had been assembled through decades of work. When we began to build Mathematica and what’s now the Wolfram Language, one of our goals was to subsume the information in such handbooks. And over the years that’s exactly what we’ve achieved, for integrals, sums, differential equations, etc. But one of the holdouts has been integral transforms for special functions. And, yes, we’ve covered a great many of these. But there are exotic examples that can often only “coincidentally” be done in closed form, and that in the past have only been found in books of tables.

But now in Version 13.3 we can do cases like:

And in fact we believe that in Version 13.3 we’ve reached the edge of what’s ever been figured out about Laplace transforms for special functions. The most extensive handbook, finally published in 1973, runs to about 400 pages. A few years ago we could do about 55% of the forward Laplace transforms in the book, and 31% of the inverse ones. But now in Version 13.3 we can do 100% of the ones that we can verify as correct (and, yes, there are definitely some mistakes in the book). It’s the end of a long journey, and a satisfying achievement in the quest to make as much mathematical knowledge as possible automatically computable.

Finite Fields!

Ever since Version 1.0 we’ve been able to do things like factoring polynomials modulo primes. And many packages have been developed that handle specific aspects of finite fields. But in Version 13.3 we now have complete, consistent coverage of all finite fields, and operations with them.
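Prime fields are just integer arithmetic modulo p; it is the prime-power case that needs a real representation. As a rough illustration in Python (not Wolfram Language), the 8-element field can be modeled as polynomials over GF(2) reduced modulo x^3 + x + 1, with each element stored as a 3-bit integer. The choice of modulus polynomial and this integer encoding are assumptions of the sketch, and need not match the element indices that FiniteField uses.

```python
MODULUS = 0b1011  # x^3 + x + 1, irreducible over GF(2) (assumed choice)
DEGREE = 3
SIZE = 1 << DEGREE


def gf8_add(a, b):
    """Add two elements: coefficientwise addition mod 2 is bitwise XOR."""
    return a ^ b


def gf8_mul(a, b):
    """Multiply two elements as polynomials, reducing by MODULUS as we go."""
    product = 0
    while b:
        if b & 1:
            product ^= a
        b >>= 1
        a <<= 1
        if a & SIZE:        # degree reached 3: subtract (XOR) the modulus
            a ^= MODULUS
    return product


addition_table = [[gf8_add(a, b) for b in range(SIZE)] for a in range(SIZE)]
multiplication_table = [[gf8_mul(a, b) for b in range(SIZE)] for a in range(SIZE)]

for row in multiplication_table:
    print(row)
```

In this encoding 0 and 1 are the additive and multiplicative identities, and every nonzero row of the multiplication table is a permutation of the elements, which is what makes the structure a field rather than just a ring.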

Here’s our symbolic representation of the field of integers modulo 5 (AKA ℤ₅ or GF(5)):

And here are symbolic representations of the elements of this field, which in this particular case can be rather trivially identified with ordinary integers mod 5:

Arithmetic immediately works on these symbolic elements:

But where things get a bit trickier is when we’re dealing with prime-power fields. We represent the field GF(2³) symbolically as:

But now the elements of this field no longer have a direct correspondence with ordinary integers. We can still assign “indices” to them, though (with elements 0 and 1 being the additive and multiplicative identities). So here’s an example of an operation in this field:

But what actually is this result? Well, it’s an element of the finite field, with index 4, represented internally in the form:

The little box opens out to show the symbolic FiniteField construct:

And we can extract properties of the element, like its index:

So here, for example, are the complete addition and multiplication tables for this field:

For the field GF(7²) these look a little more complicated:

There are various number-theoretic-like functions that one can compute for elements of finite fields. Here’s an element of GF(5¹⁰):

The multiplicative order of this (i.e. power of it that gives 1) is quite large:

Here’s its minimal polynomial:

But where finite fields really begin to come into their own is when one looks at polynomials over them. Here, for example, is factoring over GF(3²):

Expanding this gives a finite-field-style representation of the original polynomial:

Here’s the result of expanding a power of a polynomial over GF(3²):

More, Stronger Computational Geometry

We originally introduced computational geometry in a serious way into the Wolfram Language a decade ago. And ever since then we’ve been building more and more capabilities in computational geometry.

We’ve had RegionDistance for computing the distance from a point to a region for a decade. In Version 13.3 we’ve now extended RegionDistance so it can also compute the shortest distance between two regions:

We’ve also introduced RegionFarthestDistance which computes the furthest distance between any two points in two given regions:

Another new function in Version 13.3 is RegionHausdorffDistance which computes the largest of all shortest distances between points in two regions; in this case it gives a closed form:

Another pair of new functions in Version 13.3 are InscribedBall and CircumscribedBall, which give (n-dimensional) spheres that, respectively, just fit inside and outside regions you give:

In the past several versions, we’ve added functionality that combines geo computation with computational geometry. Version 13.3 has the beginning of another initiative: introducing abstract spherical geometry:

This works for spheres in any number of dimensions:

In addition to adding functionality, Version 13.3 also brings significant speed enhancements (often 10x or more) to some core operations in 2D computational geometry, making things like computing this fast even though it involves complicated regions:

Visualizations Begin to Come Alive

A great long-term strength of the Wolfram Language has been its ability to produce insightful visualizations in a highly automated way. In Version 13.3 we’re taking this further, by adding automatic “live highlighting”. Here’s a simple example, just using the function Plot. Instead of just producing static curves, Plot now automatically generates a visualization with interactive highlighting:

The same thing works for ListPlot:

The highlighting can, for example, show dates too:

There are many choices for how the highlighting should be done. The simplest thing is just to specify a style in which to highlight whole curves:

But there are many other built-in highlighting specifications. Here, for example, is "XSlice":

In the end, though, highlighting is built up from a whole collection of components (like "NearestPoint", "Crosshairs", "XDropline", etc.) that you can assemble and style for yourself:

The option PlotHighlighting defines global highlighting in a plot. But by using the Highlighted “wrapper” you can specify that only a particular element in the plot should be highlighted:

For interactive and exploratory purposes, the kind of automatic highlighting we’ve just been showing is very convenient. But if you’re making a static presentation, you’ll need to “burn in” particular pieces of highlighting, which you can do with Placed:

In indicating elements in a graphic there are different effects one can use. In Version 13.1 we introduced DropShadowing[]. In Version 13.3 we’re introducing Haloing:

Haloing can also be combined with interactive highlighting:

By the way, there are lots of nice effects you can get with Haloing in graphics. Here’s a geo example, including some parameters for the “orientation” and “thickness” of the haloing:

Publishing to Augmented + Virtual Reality

Throughout the history of the Wolfram Language 3D visualization has been an important capability. And we’re always looking for ways to share and communicate 3D geometry. Already back in the early 1990s we had experimental implementations of VR. But at the time there wasn’t anything like the kind of infrastructure for VR that would be needed to make this broadly useful. In the mid-2010s we then introduced VR functionality based on Unity, which provides powerful capabilities within the Unity ecosystem, but is not accessible outside.

Today, however, it seems there are finally broad standards emerging for AR and VR. And so in Version 13.3 we’re able to begin delivering what we hope will provide widely accessible AR and VR deployment from the Wolfram Language.

At an underlying level what we’re doing is to support the USD and GLTF geometry representation formats. But we’re also building a higher-level interface that allows anyone to “publish” 3D geometry for AR and VR.

Given a piece of geometry (which for now can’t involve too many polygons), all you do is apply ARPublish:

The result is a cloud object that has a certain underlying UUID, but is displayed in a notebook as a QR code. Now all you do is look at this QR code with your phone (or tablet, etc.) camera, and press the URL it extracts.

The result will be that the geometry you published with ARPublish now appears in AR on your phone:

Move your phone and you’ll see that your geometry has been realistically placed into the scene. You can also go to a VR “object” mode in which you can manipulate the geometry on your phone.

“Under the hood” there are some slightly elaborate things going on, particularly in providing the appropriate data to different kinds of phones. But the result is a first step in the process of easily being able to get AR and VR output from the Wolfram Language, deployed in whatever devices support AR and VR.

Getting the Details Right: The Continuing Story

In every version of Wolfram Language we add all sorts of fundamentally new capabilities. But we also work to fill in details of existing capabilities, continually pushing to make them as general, consistent and accurate as possible. In Version 13.3 there are many details that have been “made right”, in many different areas.

Here’s one example: the comparison (and sorting) of Around objects. Here are 10 random “numbers with uncertainty”:

These sort by their central value:

But if we look at these, many of their uncertainty regions overlap:

So when should we consider a particular number-with-uncertainty “greater than” another? In Version 13.3 we carefully take into account uncertainty when making comparisons. So, for example, this gives True:

But when there’s too big an uncertainty in the values, we no longer consider the ordering “certain enough”:

Here’s another example of consistency: the applicability of Duration. We introduced Duration to apply to explicit time constructs, things like Audio objects, etc. But in Version 13.3 it also applies to entities for which there’s a reasonable way to define a “duration”:

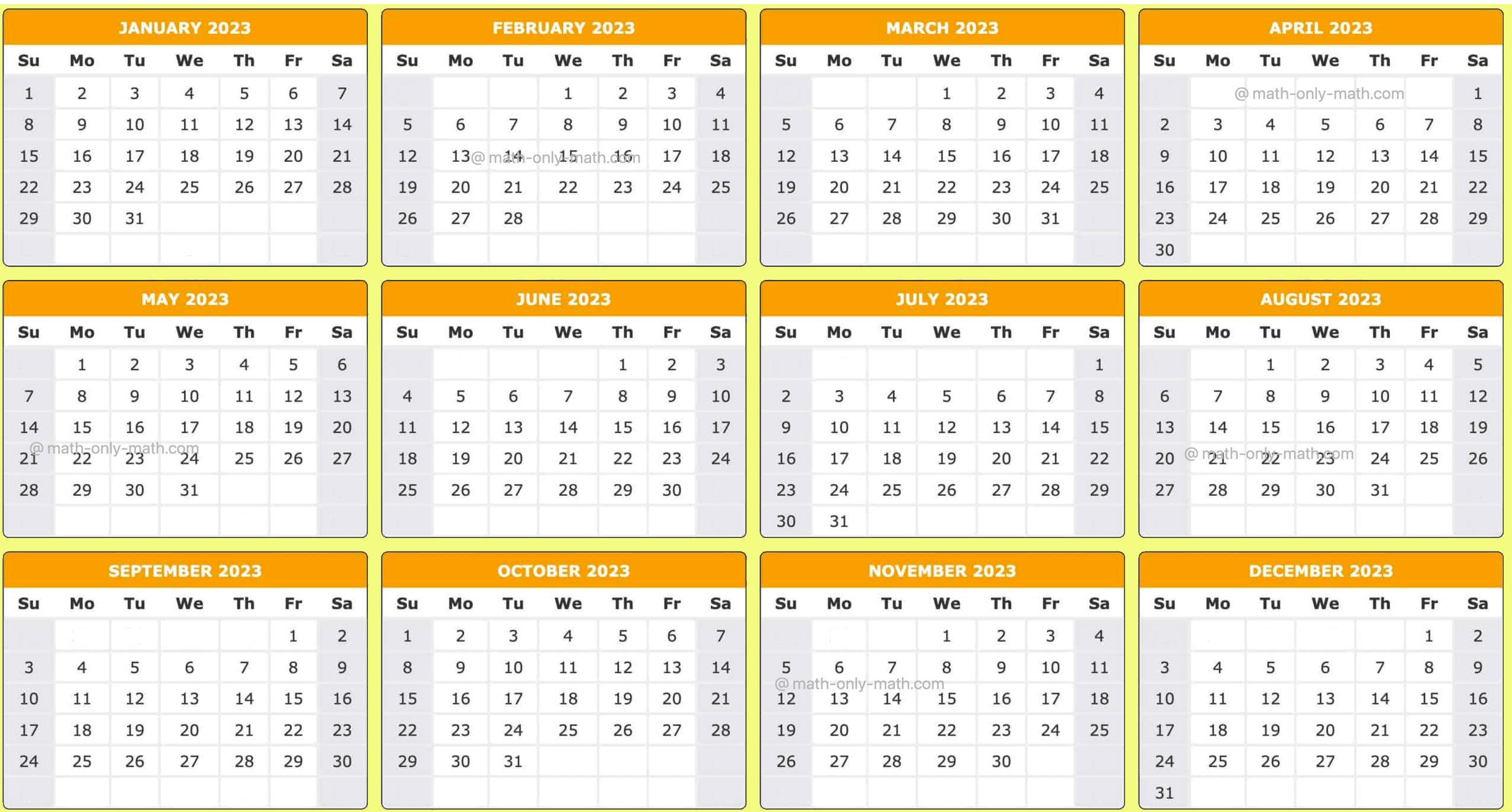

Dates (and times) are complicated things, and we’ve put a lot of effort into handling them correctly and consistently in the Wolfram Language. One concept that we introduced a few years ago is date granularity: the (subtle) analog of numerical precision for dates. But at first only some date functions supported granularity; now in Version 13.3 all date functions include a DateGranularity option, so that granularity can consistently be tracked through all date-related operations:

Also in dates, something that’s been added, particularly for astronomy, is the ability to deal with “years” specified by real numbers:

And one consequence of this is that it becomes easier to make a plot of something like astronomical distance as a function of time:

Also in astronomy, we’ve been steadily extending our capabilities to consistently fill in computations for more situations. In Version 13.3, for example, we can now compute sunrise, etc. not just from points on Earth, but from points anywhere in the solar system:

By the way, we’ve also made the computation of sunrise more precise. So now if you ask for the position of the Sun right at sunrise you’ll get a result like this:

How come the altitude of the Sun is not zero at sunrise? That’s because the disk of the Sun is of nonzero size, and “sunrise” is defined to be when any part of the Sun pokes over the horizon.
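A back-of-the-envelope Python calculation gives the size of this effect on Earth. It assumes the nominal solar radius (695,700 km) and a Sun distance of exactly one astronomical unit, and it ignores atmospheric refraction and the observer’s elevation, so it is only a rough estimate and not the value the Wolfram Language computes.

```python
import math

SUN_RADIUS_KM = 695_700.0               # nominal solar radius
ASTRONOMICAL_UNIT_KM = 149_597_870.7    # mean Earth-Sun distance

# Half the apparent angular width of the solar disk, as seen from 1 AU.
angular_radius_deg = math.degrees(math.asin(SUN_RADIUS_KM / ASTRONOMICAL_UNIT_KM))

# When the upper edge of the disk touches the horizon, the center is this far below it.
center_altitude_deg = -angular_radius_deg

print(round(angular_radius_deg, 3), round(center_altitude_deg, 3))
```

So under these simplifying assumptions the center of the Sun sits roughly a quarter of a degree below the horizon at the moment its upper edge first appears.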

Even Easier to Type: Affordances for Wolfram Language Input

Back in 1988 when what’s now Wolfram Language first existed, the only way to type it was like ordinary text. But gradually we’ve introduced more and more “affordances” to make it easier and faster to type correct Wolfram Language input. In 1996, with Version 3, we introduced automatic spacing (and spanning) for operators, as well as brackets that flashed when they matched, and things like -> being automatically replaced by →. Then in 2007, with Version 6, we introduced (with some trepidation at first) syntax coloring. We’d had a way to request autocompletion of a symbol name all the way back to the beginning, but it had never been good or efficient enough for us to make it happen all the time as you type. But in 2012, for Version 9, we created a much more elaborate autocomplete system that was useful and efficient enough that we turned it on for all notebook input. A key feature of this autocomplete system was its context-sensitive knowledge of the Wolfram Language, and how and where different symbols and strings typically appear. Over the past decade, we’ve gradually refined this system to the point where I, for one, deeply rely on it.

In recent versions, we’ve made other “typability” improvements. For example, in Version 12.3, we generalized the -> to → transformation to a whole collection of “auto operator renderings”. Then in Version 13.0 we introduced “automatching” of brackets, in which, for example, if you enter [ at the end of what you’re typing, you’ll automatically get a matching ].

Making “typing affordances” work smoothly is a painstaking and tricky business. But in every recent version we’ve steadily been adding more features that, in very “natural” ways, make it easier and faster to type Wolfram Language input.

In Version 13.3 one major change is an enhancement to autocompletion. Instead of just showing pure completions in which characters are appended to what’s already been typed, the autocompletion menu now includes “fuzzy completions” that fill in intermediate characters, change capitalization, etc.

So, for example, if you type “lp” you now get ListPlot as a completion (the little underlines indicate where the letters you actually type appear):
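To get a feel for what a “fuzzy completion” has to do, here is a minimal sketch of one plausible matching rule: a name matches if it contains the typed letters in order, ignoring case. The helper fuzzyMatchQ is hypothetical and is not the notebook’s actual completion algorithm, which also has to rank candidates.

```wolfram
(* Hypothetical helper: does name contain the typed letters in order, ignoring case? *)
fuzzyMatchQ[typed_String, name_String] :=
  StringMatchQ[name,
   StringExpression @@ Flatten[{___, Map[{#, ___} &, Characters[typed]]}],
   IgnoreCase -> True]

fuzzyMatchQ["lp", "ListPlot"]   (* True: L ... P *)
fuzzyMatchQ["lp", "Plot"]       (* False: no p after the l *)

(* All built-in names that "lp" could abbreviate under this simple rule *)
Short[Select[Names["System`*"], fuzzyMatchQ["lp", #] &]]
```

The last line typically returns a long list, which suggests why a real completion menu needs a ranking on top of plain subsequence matching.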

From a design point of view one thing that’s important about this is that it further removes the “short name” premium, and weights things even further on the side of wanting names that explain themselves when they’re read, rather than names that are easy to type in an unassisted way. With the Wolfram Function Repository it’s become increasingly common to want to type ResourceFunction. And we’d been thinking that perhaps we should have a special, short notation for that. But with the new autocompletion, one can operationally just press three …

When one designs something and gets the design right, people usually don’t notice; things just “work as they expect”. But when there’s a design error, that’s when people notice (and are frustrated by) the design. But then there’s another case: a situation where, for example, there are two things that could happen, and sometimes one wants one, and sometimes the other. In doing the design, one has to pick a particular branch. And when this happens to be the branch people want, they don’t notice, and they’re happy. But if they want the other branch, it can be confusing and frustrating.

In the design of the Wolfram Language one of the things that has to be chosen is the precedence for every operator: a + b × c means a + (b × c) because × has higher precedence than +. Often the correct order of precedences is fairly obvious. But sometimes it’s simply impossible to make everyone happy all the time. And so it is with → and &. It’s very convenient to be able to add & at the end of something you type, and make it into a pure function. But that means if you type a → b & the whole thing becomes a function: (a → b) &. When functions have options, however, one often wants things like name → function. The natural tendency is to type this as name → body &. But this will mean (name → body) & rather than name → (body &). And, yes, when you try to run the function, it’ll notice it doesn’t have correct arguments and options specified. But you’d like to know that what you’re typing isn’t right as soon as you type it. And now in Version 13.3 we have a mechanism for that. As soon as you enter & to “end a function”, you’ll see the extent of the function flash:

And, yup, you can see that’s wrong. Which gives you the chance to fix it as:
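The two parsings can also be checked directly in the kernel. This small sketch wraps the input in Hold so that nothing evaluates, and uses FullForm to show the structure; the symbols name and body are just placeholders.

```wolfram
(* Without parentheses, & swallows the whole rule *)
FullForm[Hold[name -> body &]]
(* Hold[Function[Rule[name, body]]] *)

(* With parentheses, the pure function is the right-hand side of the rule *)
FullForm[Hold[name -> (body &)]]
(* Hold[Rule[name, Function[body]]] *)

(* A typical option setting that needs the parentheses *)
Plot[Sin[x], {x, 0, 10}, ColorFunction -> (Hue[#] &)]
```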

There’s another notebook-related update in Version 13.3 that isn’t directly related to typing, but will help in the construction of easy-to-navigate user interfaces. We’ve had ActionMenu since 2007, but it’s only been able to create one-level menus. In Version 13.3 it’s been extended to arbitrary hierarchical menus:

Again not directly related to typing, but now relevant to managing and editing code, there’s an update in Version 13.3 to package editing in the notebook interface. Bring up a .wl file and it’ll appear as a notebook. But its default toolbar is different from the usual notebook toolbar (and is newly designed in Version 13.3):

Go To now gives you a way to immediately go to the definition of any function whose name matches what you type, as well as any section, etc.:

The numbers on the right here are code line numbers; you can also go directly to a specific line number by typing :nnn.

The Elegant Code Project

One of the central goals (and achievements) of the Wolfram Language is to create a computational language that can be used not only as a way to tell computers what to do, but also as a way to communicate computational ideas for human consumption. In other words, Wolfram Language is intended not only to be written by humans (for consumption by computers), but also to be read by humans.

Crucial to this is the broad consistency of the Wolfram Language, as well as its use of carefully chosen natural-language-based names for functions, etc. But what can we do to make Wolfram Language as easy and pleasant as possible to read? In the past we’ve balanced our optimization of the appearance of Wolfram Language between reading and writing. But in Version 13.3 we’ve got the beginnings of our Elegant Code project: to find ways to render Wolfram Language so that it’s specifically optimized for reading.

For example, here’s a small piece of code (from my An Elementary Introduction to the Wolfram Language), shown in the default way it’s rendered in notebooks:

But in Version 13.3 you can use Format > Screen Environment > Elegant to set a notebook to use the current version of “elegant code”:

(And, yes, this is what we’re actually using for code in this post, as well as some other recent ones.) So what’s the difference? First of all, we’re using a proportionally spaced font that makes the names (here of symbols) easy to “read like words”. And second, we’re adding space between these “words”, and graying back “structural elements” like [ … ] and { … }. When you write a piece of code, things like these structural elements need to stand out enough for you to “see they’re right”. But when you’re reading code, you don’t need to pay as much attention to them. Because the Wolfram Language is so based on “word-like” names, you can typically “understand what it’s saying” just by “reading these words”.

Of course, making code “elegant” is not just a question of formatting; it’s also a question of what’s actually in the code. And, yes, as with writing text, it takes effort to craft code that “expresses itself elegantly”. But the good news is that the Wolfram Language, through its uniquely broad and high-level character, makes it surprisingly straightforward to create code that expresses itself extremely elegantly.

But the point now is to make that code not only elegant in content, but also elegant in formatting. In technical documents it’s common to see math that’s at least formatted elegantly. But when one sees code, more often than not, it looks like something only a machine could appreciate. Of course, if the code is in a traditional programming language, it’ll usually be long and not really intended for human consumption. But what if it’s elegantly crafted Wolfram Language code? Well then we’d like it to look as attractive as text and math. And that’s the goal of our Elegant Code project.

There are many tradeoffs, and many issues to be navigated. But in Version 13.3 we’re definitely making progress. Here’s an example that doesn’t have so many “words”, but where the elegant code formatting still makes the “blocking” of the code more obvious:

Here’s a slightly longer piece of code, where again the elegant code formatting helps pull out “readable” words, as well as making the overall structure of the code more obvious:

Particularly in recent years, we’ve added many mechanisms to let one write Wolfram Language that’s easier to read. There are the auto operator renderings, like m[[i]] turning into m〚i〛. And then there are things like the ↦ notation for pure functions. One particularly important element is Iconize, which lets you show any piece of Wolfram Language input in a visually “iconized” form, which nevertheless evaluates just like the corresponding underlying expression:

Iconize lets you effectively hide details (like large amounts of data, option settings, etc.). But sometimes you want to highlight things. You can do it with Style, Framed, Highlighted and, in Version 13.3, Squiggled:

By default, all these constructs persist through evaluation. But in Version 13.3 all of them now have the option StripOnInput, and with this set, you have something that shows up highlighted in an input cell, but where the highlighting is stripped when the expression is actually fed to the Wolfram Language kernel.

These show their highlighting in the notebook:

But when used in input, the highlighting is stripped:
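As a rough sketch of how these pieces might be typed in a notebook: the expressions and the label "data" below are made up for illustration, and the behavior of StripOnInput is assumed from the description above rather than verified here.

```wolfram
(* Hide a large expression behind a small labeled icon; the icon that comes
   back can be copied into later input and stands in for the full list *)
Iconize[Range[1000], "data"]

(* The highlighting wrappers named above *)
{Framed[x], Highlighted[y], Squiggled[z]}

(* Assumption: with StripOnInput -> True the wrapper is shown in the notebook
   but dropped when the expression is sent to the kernel as input *)
Highlighted[x + y, StripOnInput -> True]
```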

See More Also…

A great strength of the Wolfram Language (yes, perhaps initiated by my original 1988 Mathematica Book) is its detailed documentation, which has now proved valuable not only for human users but also for AIs. Plotting the number of words that appear in the documentation in successive versions, we see a strong progressive increase:

But with all that documentation, and all these new things to be documented, the problem of appropriately crosslinking everything has increased. Even back in Version 1.0, when the documentation was a physical book, there were “See Also’s” between functions:

And by now there’s a complicated network of such See Also’s:

But that’s just the network of how functions point to functions. What about other kinds of constructs? Like formats, characters or entity types, or, for that matter, entries in the Wolfram Function Repository, Wolfram Data Repository, etc. Well, in Version 13.3 we’ve done a first iteration of crosslinking all these kinds of things.

So here now are the “See Also” areas for Graph and Molecule:

Not only are there functions here; there are also other kinds of things that a person (or AI) looking at these pages might find relevant.

It’s great to be able to follow links, but sometimes it’s better just to have material immediately accessible, without following a link. Back in Version 1.0 we made the decision that when a function inherits some of its options from a “base function” (say Plot from Graphics), we only need to explicitly list the non-inherited option values. At the time, this was a good way to save a little paper in the printed book. But now the optimization is different, and finally in Version 13.3 we have a way to show “All Options”, tucked away so it doesn’t distract from the typically-more-important non-inherited options.

Here’s the setup for Plot. First, the list of non-inherited option values:

Then, at the end of the Details section

which opens to:
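The same distinction can be explored programmatically. This is only a sketch of the idea using Options, not a description of how the documentation pages are generated; the variable name allOptions is arbitrary.

```wolfram
(* Every option Plot accepts, including the ones inherited from Graphics *)
allOptions = Keys[Options[Plot]];
Length[allOptions]

(* Options that Plot has but Graphics does not *)
Complement[allOptions, Keys[Options[Graphics]]]
```

Note that this compares option names only; an inherited option can still have a different default value in Plot than in Graphics.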

Pictures from Words: Generative AI for Images

One of the remarkable things that’s emerged as a possibility from recent advances in AI and neural nets is the generation of images from textual descriptions. It’s not yet realistic to do this at all well on anything but a high-end (and typically server) GPU-enabled machine. But in Version 13.3 there’s now a built-in function ImageSynthesize that can get images synthesized, for now through an external API.

You give text, and ImageSynthesize will try to generate images for which that text is a description:

Sometimes these images will be directly useful in their own right, perhaps as “theming images” for documents or user interfaces. Sometimes they’ll provide raw material that can be developed into icons or other art. And sometimes they’re most useful as inputs to tests or other algorithms.

And one of the important things about ImageSynthesize is that it can immediately be used as part of any Wolfram Language workflow. Pick a random sentence from Alice in Wonderland:

Now ImageSynthesize can “illustrate” it:

Or we can get AI to feed AI:

ImageSynthesize is set up to automatically be able to synthesize images of different sizes:

You can take the output of ImageSynthesize and immediately process it:

ImageSynthesize can not only produce complete images, but can also fill in transparent parts of “incomplete” images:
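A workflow along these lines might look like the following sketch. The prompt text is made up, the results differ on every run, and the calls only work once access to the external image-generation service has been set up.

```wolfram
(* Synthesize an image from a short description (illustrative prompt) *)
ImageSynthesize["a cat wearing a top hat"]

(* Pick a random sentence from the built-in example text and "illustrate" it *)
sentence = RandomChoice[TextSentences[ExampleData[{"Text", "AliceInWonderland"}]]];
img = ImageSynthesize[sentence];

(* The result is an ordinary image, so it can be processed immediately *)
EdgeDetect[img]
```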

In addition to ImageSynthesize and all its new LLM functionality, Version 13.3 also includes a number of advances in the core machine learning system for Wolfram Language. Probably the most notable are speedups of up to 10x and beyond for neural net training and evaluation on x86-compatible systems, as well as better models for ImageIdentify. There are also a variety of new networks in the Wolfram Neural Net Repository, particularly ones based on transformers.

Digital Twins: Fitting System Models to Data

It’s been five years since we first began to introduce industrial-scale systems engineering capabilities in the Wolfram Language. The goal is to be able to compute with models of engineering and other systems that can be described by (potentially very large) collections of ordinary differential equations and their discrete analogs. Our separate Wolfram System Modeler product provides an IDE and GUI for graphically creating such models.

For the past five years we’ve been able to do high-efficiency simulation of these models from within the Wolfram Language. And over the past few years we’ve been adding all sorts of higher-level functionality for programmatically creating models, and for systematically analyzing their behavior. A major focus in recent versions has been the synthesis of control systems, and various forms of controllers.

Version 13.3 now tackles a different issue, which is the alignment of models with real-world systems. The idea is to have a model which contains certain parameters, and then to determine these parameters by essentially fitting the model’s behavior to observed behavior of a real-world system.

Let’s start by talking about a simple case where our model is just defined by a single ODE:

This ODE is simple enough that we can find its analytical solution:

So now let’s make some “simulated real-world data” assuming a = 2:

Here’s what the data looks like:

Now let’s try to “calibrate” our original model using this data. It’s a process similar to machine learning training. In this case we make an “initial guess” that the parameter a is 1; then when SystemModelCalibrate runs it shows the “loss” decreasing as the correct value of a is found:

The “calibrated” model does indeed have a ≈ 2:

Now we can compare the calibrated model with the data:
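The same calibrate-and-compare loop can be sketched with long-standing functions. Everything specific here is an assumption made for illustration: the ODE x'(t) = -a x(t) is a stand-in rather than the model used above, the noise level is arbitrary, and FindFit is used in place of SystemModelCalibrate.

```wolfram
(* Hypothetical stand-in model: x'(t) == -a x(t) with x(0) == 1 *)
sol = DSolveValue[{x'[t] == -a x[t], x[0] == 1}, x[t], t];

(* Simulated "real-world" data: the solution with a = 2, plus a little noise *)
SeedRandom[1];
data = Table[{t, (sol /. a -> 2) + RandomReal[{-0.01, 0.01}]}, {t, 0, 2, 0.1}];

(* Fit the parameter, starting from the initial guess a = 1 *)
fit = FindFit[data, sol, {{a, 1}}, t]

(* Compare the fitted curve with the data *)
Show[ListPlot[data], Plot[sol /. fit, {t, 0, 2}]]
```

The fitted value should come out close to 2, which mirrors the calibration result described above on a much smaller scale.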

As a slightly more realistic engineering-style example let’s look at a model of an electric motor (with both electrical and mechanical parts):

Let’s say we’ve got some data on the behavior of the motor; here we’ve assumed that we’ve measured the angular velocity of a component in the motor as a function of time. Now we can use this data to calibrate parameters of the model (here the resistance of a resistor and the damping constant of a damper):

Here are the fitted parameter values:

And here’s a full plot of the angular velocity data, together with the fitted model and its 95% confidence bands:

SystemModelCalibrate can be used not only in fitting a model to real-world data, but also for example in fitting simpler models to more complicated ones, making possible various forms of “model simplification”.

Symbolic Testing Framework

The Wolfram Language is by many measures one of the world’s most complex pieces of software engineering. And over the decades we’ve developed a large and powerful system for testing and validating it. A decade ago, in Version 10, we began to make some of our internal tools available for anyone writing Wolfram Language code. Now in Version 13.3 we’re introducing a more streamlined (and “symbolic”) version of our testing framework.

The basic idea is that each test is represented by a symbolic TestObject, created using TestCreate:

By itself, TestObject is an inert object. You can run the test it represents using TestEvaluate:

Each test object has a whole collection of properties, some of which only get filled in when the test is run:

It’s very convenient to have symbolic test objects that one can manipulate using standard Wolfram Language functions, say selecting tests with particular features, or generating new tests from old. And when one builds a test suite, one does it just by making a list of test objects.

This makes a list of test objects (and, yes, there’s some trickiness because TestCreate needs to keep unevaluated the expression that’s going to be tested):

But given these tests, we can now generate a report from running them:

TestReport has various options that allow you to monitor and control the running of a test suite. For example, here we’re saying to echo every "TestEvaluated" event that occurs:
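Putting those steps together, a minimal session might look like this sketch. The tested expressions are made up, and the exact form of the HandlerFunctions setting is an assumption based on the description above rather than something confirmed here.

```wolfram
(* Create an inert symbolic test: input expression and expected result *)
test = TestCreate[1 + 1, 2];

(* Run it; the evaluated test object now has its outcome filled in *)
result = TestEvaluate[test];
result["Outcome"]

(* A test suite is just a list of test objects *)
tests = {TestCreate[2 + 2, 4], TestCreate[StringLength["abc"], 3]};
report = TestReport[tests];
report["TestsSucceededCount"]

(* Assumed option form: echo each "TestEvaluated" event as the suite runs *)
TestReport[tests, HandlerFunctions -> <|"TestEvaluated" -> Echo|>]
```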

Did You Get That Math Right?

Most of what the Wolfram Language is about is taking inputs from humans (as well as programs, and now AIs) and computing outputs from them. But a few years ago we started introducing capabilities for having the Wolfram Language ask questions of humans, and then assessing their answers.

In recent versions we’ve been building up sophisticated ways to construct and deploy “quizzes” and other collections of questions. But one of the core issues is always how to determine whether a person has answered a particular question correctly. Sometimes that’s easy to determine. If we ask “What’s 2 + 2?”, the answer better be “4” (or conceivably “four”). But what if we ask a question where the answer is some algebraic expression? The issue is that there may be many mathematically equal forms of that expression. And it depends on what exactly one’s asking whether one considers a particular form to be the “right answer” or not.

For example, here we’re computing a derivative:

And here we’re doing a factoring problem:

These two answers are mathematically equal. And they’d both be “reasonable answers” for the derivative if it appeared as a question in a calculus course. But in an algebra course, one wouldn’t want to consider the unfactored form a “correct answer” to the factoring problem, even though it’s “mathematically equal”.
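The gap between “mathematically equal” and “the same form” is easy to see with a small made-up polynomial (this is an illustration, not the example pictured above):

```wolfram
factored = (x - 1) (x + 1);
expanded = x^2 - 1;

Simplify[factored == expanded]   (* True: mathematically equal *)
factored === expanded            (* False: structurally different *)
Factor[expanded] === factored    (* True: factoring recovers the same form *)
```

An assessment that only checks equality would accept both forms; one that cares about form has to compare structure as well.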

And to deal with these kinds of issues, we’re introducing in Version 13.3 more detailed mathematical assessment functions. With a "CalculusResult" assessment function, it’s OK to give the unfactored form:

But with a "PolynomialResult" assessment function, the algebraic form of the expression has to be the same for it to be considered “correct”:

There’s also another type of assessment function, "ArithmeticResult", which only allows trivial arithmetic rearrangements, so that it considers 2 + 3 equivalent to 3 + 2, but doesn’t consider 2/3 equivalent to 4/6:

Here’s how you’d build a question with this:

And now if you type “2/3” it’ll say you’ve got it right, but if you type “4/6” it won’t. However, if you use, say, "CalculusResult" in the assessment function, it’ll say you got it right even if you type “4/6”.
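A question of this kind might be assembled roughly as follows. The question text is invented, and the two-argument form AssessmentFunction[key, method] with these method names is assumed from the description above.

```wolfram
(* Strict: only trivial arithmetic rearrangements of 2/3 count as correct *)
strict = AssessmentFunction[2/3, "ArithmeticResult"];
QuestionObject["What is 1/3 + 1/3?", strict]

(* Lenient: any mathematically equal answer, such as 4/6, should be accepted *)
lenient = AssessmentFunction[2/3, "CalculusResult"];
QuestionObject["What is 1/3 + 1/3?", lenient]
```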

Streamlining Parallel Computation

Ever since the mid-1990s there’s been the capability to do parallel computation in the Wolfram Language. And certainly for me it’s been critical in a whole range of research projects I’ve done. I currently have 156 cores routinely available in my “home” setup, distributed across 6 machines. It’s sometimes challenging from a system administration point of view to keep all these machines and their networking running as one wants. And one of the things we’ve been doing in recent versions, and have now completed in Version 13.3, is to make it easier from within the Wolfram Language to see and manage what’s going on.

It all comes down to specifying the configuration of kernels. And in Version 13.3 that’s now done using symbolic KernelConfiguration objects. Here’s an example of one:

There’s all sorts of information in the kernel configuration object:

It describes “where” a kernel with that configuration will be, how to get to it, and how it should be launched. The kernel might just be local to your machine. Or it might be on a remote machine, accessible through ssh, or https, or our own wstp (Wolfram Symbolic Transport Protocol) or lwg (Lightweight Grid) protocols.

In Version 13.3 there’s now a GUI for setting up kernel configurations:

The Kernel Configuration Editor lets you enter all the details that are needed, about network connections, authentication, locations of executables, etc.

But once you’ve set up a KernelConfiguration object, that’s all you ever need, for example to say “where” to do a remote evaluation:

ParallelMap and other parallel functions then just work by doing their computations on kernels specified by a list of KernelConfiguration objects. You can set up the list in the Kernels Settings GUI:

Here’s my personal default collection of parallel kernels:

This now counts the number of individual kernels running on each machine specified by these configurations:

In Version 13.3 a convenient new feature is named collections of kernels. For example, this runs a single “representative” kernel on each distinct machine:
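On a single machine with only the default local kernels (an assumption; no KernelConfiguration objects or named collections are used here), the counting step can be sketched like this:

```wolfram
(* Launch whatever parallel kernels are configured by default *)
LaunchKernels[];

(* Ask every kernel which machine it is on, then count kernels per machine *)
Counts[ParallelEvaluate[$MachineName]]

(* Parallel functions then spread their work across those kernels *)
ParallelMap[PrimeQ, Range[10]]
```

With a multi-machine setup like the one described above, the Counts result would have one entry per machine instead of a single entry.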

Just Call That C Function! Direct Access to External Libraries

Let’s say you’ve got an external library written in C, or in some other language that can compile to a C-compatible library. In Version 13.3 there’s now foreign function interface (FFI) capability that allows you to directly call any function in the external library just using Wolfram Language code.

Here’s a very trivial C function:

This function happens to be included in compiled form in the compilerDemoBase library that’s part of Wolfram Language documentation. Given this library, you can use ForeignFunctionLoad to load the library and create a Wolfram Language function that directly calls the C addone function. All you need do is specify the library and C function, and then give the type signature for the function:

Now ff is a Wolfram Language function that calls the C addone function:
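In code, the load-and-call steps might look like the sketch below. The C source in the comment and the "CInt" type signature are assumptions about what addone looks like, since the function itself isn’t reproduced here.

```wolfram
(* Assumed C source: int addone(int x) { return x + 1; } *)

(* Load addone from the compilerDemoBase library, giving its type signature *)
ff = ForeignFunctionLoad["compilerDemoBase", "addone", {"CInt"} -> "CInt"];

(* Call the C function like any other Wolfram Language function *)
ff[41]
```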

The C function addone happens to have a particularly simple type signature, that can immediately be represented in terms of compiler types that have direct analogs as Wolfram Language expressions. But in working with low-level languages, it’s very common to have to deal directly with raw memory, which is something that never happens when you’re purely working at the Wolfram Language level.

So, for example, in the OpenSSL library there’s a function called RAND_bytes, whose C type signature is int RAND_bytes(unsigned char *buf, int num):

And the important thing to notice is that this contains a pointer to a buffer buf that gets filled by RAND_bytes. If you were calling RAND_bytes from C, you’d first allocate memory for this buffer, then (after calling RAND_bytes) read back whatever was written to the buffer. So how can you do something analogous when you’re calling RAND_bytes using ForeignFunction in Wolfram Language? In Version 13.3 we’re introducing a family of constructs for working with pointers and raw memory.

So, for example, here’s how we can create a Wolfram Language foreign function corresponding to RAND_bytes:

But to actually use this, we need to be able to allocate the buffer, which in Version 13.3 we can do with RawMemoryAllocate:

This creates a buffer that can store 10 unsigned chars. Now we can call rb, giving it this buffer:

rb will fill the buffer, and then we can import the results back into Wolfram Language:
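The whole sequence might be written as in the following sketch. The library name "libcrypto" and the exact type and import specifications are assumptions; they may need adjusting for a particular system or OpenSSL installation.

```wolfram
(* OpenSSL declares: int RAND_bytes(unsigned char *buf, int num); *)
(* Assumption: the OpenSSL crypto library is found under the name "libcrypto" *)
rb = ForeignFunctionLoad["libcrypto", "RAND_bytes",
   {"RawPointer"::["UnsignedInteger8"], "CInt"} -> "CInt"];

(* Allocate a managed buffer that can hold 10 unsigned 8-bit integers *)
buf = RawMemoryAllocate["UnsignedInteger8", 10];

(* Let the C function fill the buffer with 10 random bytes *)
rb[buf, 10];

(* Read the 10 bytes back as an ordinary list *)
RawMemoryImport[buf, {"List", 10}]
```

The output is a list of 10 integers between 0 and 255 that changes on every call.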

There’s some complicated stuff going on here. RawMemoryAllocate does ultimately allocate raw memory, and you can see its hex address in the symbolic object that’s returned. But RawMemoryAllocate creates a ManagedObject, which keeps track of whether it’s being referenced, and automatically frees the memory that’s been allocated when nothing references it anymore.

Long ago languages like BASIC provided PEEK and POKE functions for reading and writing raw memory. It was always a dangerous thing to do, and it’s still dangerous. But it’s somewhat higher level in Wolfram Language, where in Version 13.3 there are now functions like RawMemoryRead and RawMemoryWrite. (For writing data into a buffer, RawMemoryExport is also relevant.)

Most of the time it’s very convenient to deal with memory-managed ManagedObject constructs. But for the full low-level experience, Version 13.3 provides UnmanageObject, which disconnects automatic memory management for a managed object, and requires you to explicitly use RawMemoryFree to free it.

One feature of C-like languages is the concept of a function pointer. And normally the function that the pointer is pointing to is just something like a C function. But in Version 13.3 there’s another possibility: it can be a function defined in Wolfram Language. Or, in other words, from within an external C function it’s possible to call back into the Wolfram Language.

Let’s use this C program:

You can actually compile it right from Wolfram Language using:

Now we load frun as a foreign function, with a type signature that uses "OpaqueRawPointer" to represent the function pointer:

What we need next is to create a function pointer that points to a callback to Wolfram Language:

The Wolfram Language function here is just Echo. But when we call frun with the cbfun function pointer we can see our C code calling back into Wolfram Language to evaluate Echo:

ForeignFunctionLoad provides an extremely convenient way to call external C-like functions directly from top-level Wolfram Language. But if you’re calling C-like functions a great many times, you’ll sometimes want to do it using compiled Wolfram Language code. And you can do this using the LibraryFunctionDeclaration mechanism that was introduced in Version 13.1. It’ll be more complicated to set up, and it’ll require an explicit compilation step, but there’ll be slightly less “overhead” in calling the external functions.

The Advance of the Compiler Continues

For several years we’ve had an ambitious project to develop a large-scale compiler for the Wolfram Language. And in each successive version we’re further extending and improving the compiler. In Version 13.3 we’ve managed to compile more of the compiler itself (which, needless to say, is written in Wolfram Language), thereby making the compiler more efficient in compiling code. We’ve also enhanced the performance of the code generated by the compiler, particularly by optimizing memory management done in the compiled code.

Over the past several versions we’ve been steadily making it possible to compile more and more of the Wolfram Language. But it’ll never make sense to compile everything, and in Version 13.3 we’re adding KernelEvaluate to make it more convenient to call back from compiled code to the Wolfram Language kernel.

Here’s an example:

We’ve got an argument n that’s declared as being of type MachineInteger. Then we’re doing a computation on n in the kernel, and using TypeHint to specify that its result will be of type MachineInteger. There’s at least arithmetic going on outside the KernelEvaluate that can be compiled, even though the KernelEvaluate is just calling uncompiled code:
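A function with this shape might be written as in the sketch below. The use of PrimePi inside KernelEvaluate and the trailing + 1 are made up for illustration, and Typed is used here to declare the argument type; the example pictured above may differ in these details.

```wolfram
(* n is a machine integer; PrimePi[n] is evaluated by the kernel, while the
   addition outside KernelEvaluate is ordinary compiled arithmetic *)
cf = FunctionCompile[
   Function[Typed[n, "MachineInteger"],
    TypeHint[KernelEvaluate[PrimePi[n]], "MachineInteger"] + 1]];

cf[100]
```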

There are other enhancements to the compiler in Version 13.3 as well. For example, Cast now allows data types to be cast in a way that directly emulates what the C language does. There’s also now SequenceType, which is a type analogous to the Wolfram Language Sequence construct, and able to represent an arbitrary-length sequence of arguments to a function.

And Much More…

In addition to everything we’ve already discussed here, there are lots of other updates and enhancements in Version 13.3, as well as thousands of bug fixes.

Some of the additions fill out corners of functionality, adding completeness or consistency. Statistical fitting functions like LinearModelFit now accept input in all the various association etc. forms that machine learning functions like Classify accept. TourVideo now lets you “tour” GeoGraphics, with waypoints specified by geo positions. ByteArray now supports the “corner case” of zero-length byte arrays. The compiler can now handle byte array functions, and more string functions. Nearly 40 more special functions can now handle numeric interval computations. BarcodeImage adds support for UPCE and Code93 barcodes. SolidMechanicsPDEComponent adds support for the Yeoh hyperelastic model. And, twenty years after we first introduced export of SVG, there’s now built-in support for import of SVG not only to raster graphics, but also to vector graphics.

There are new “utility” functions like RealValuedNumberQ and RealValuedNumericQ. There’s a new function FindImageShapes that begins the process of systematically finding geometrical forms in images. There are a number of new data structures, like "SortedKeyStore" and "CuckooFilter".
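A quick way to see what the two new predicates distinguish is to map them over a few sample values (the values are chosen for illustration):

```wolfram
samples = {3, 2.5, 1/3, 1 + I, Pi, x};

(* Explicit numbers with a real value *)
RealValuedNumberQ /@ samples

(* Numeric quantities with a real value, which also covers constants like Pi *)
RealValuedNumericQ /@ samples

(* The zero-length "corner case" mentioned above *)
Length[ByteArray[{}]]
```

The interesting entries are Pi, which is numeric but not an explicit number, and 1 + I, which is a number but not real-valued.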

There are also functions whose algorithms, and output, have been improved. ImageSaliencyFilter now uses new machine-learning-based methods. RSolveValue gives cleaner and smaller results for the important case of linear difference equations with constant coefficients.
